Advantech and D3 package Intel Core Ultra, GMSL cameras and RealSense depth to sharpen AMR vision

By Sophia Chen

Two hardware builders have bundled high-bandwidth cameras, depth sensors and an Intel Core Ultra compute platform into a single off-the-shelf stack for autonomous mobile robots (AMRs). The package promises 360-degree, low-latency perception from industrial-grade sensors, but it also forces integrators to rethink thermal, synchronization and software trade-offs.

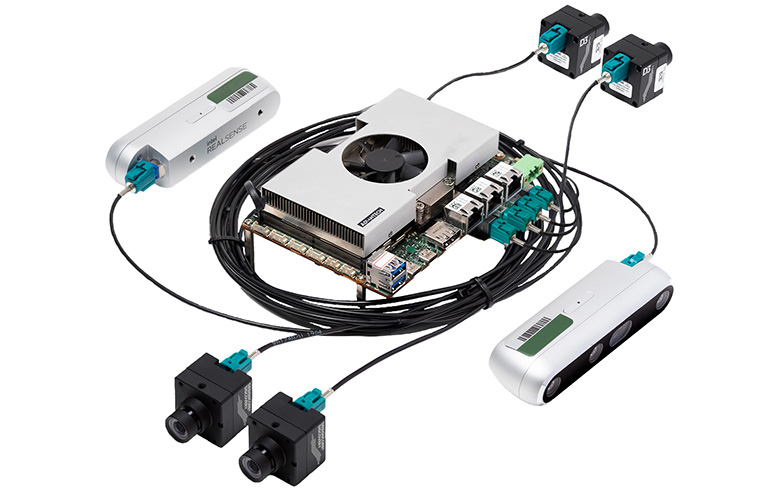

Advantech and D3 Embedded this month announced an integrated vision-and-compute offering that pairs Advantech’s AFE-R360 and AFE-R760 systems, built on Intel’s Core Ultra (Meteor Lake-H/U), with D3’s DesignCore Discovery PRO GMSL camera modules and Intel RealSense depth sensors. The partners say the combination supports up to six GMSL cameras, multiple depth streams and ROS-friendly I/O, enabling AMRs to run higher-rate object detection and mapping at the edge (source: The Robot Report, Nov. 28, 2025: https://www.therobotreport.com/advantech-integrates-compute-with-d3-embedded-sensing-for-mobile-robots/).

An assembly of specific parts, not a black box

Advantech’s smallest board, the AFE-R360, is a 3.5-inch single-board computer that exposes up to eight MIPI-CSI lanes, three LAN ports and three USB-C ports for sensor inputs. With an Advantech camera I/O card it can ingest six GMSL camera streams simultaneously. The AFE-R760 chassis variant supports four GMSL cameras for more conventional, rack-mounted builds (Advantech / The Robot Report).

On the sensing side, D3’s DesignCore Discovery PRO cameras use the Sony ISX031 sensor in an IP67 housing, with high dynamic range (HDR) and LED-flicker mitigation (LFM) to keep detection pipelines stable under factory lighting. The stack pairs those visible-light cameras with Intel RealSense Depth Camera D457 stereo units, which also connect over GMSL, for depth. Together they provide overlapping fields of view and dense point clouds suitable for real-time segmentation and obstacle prediction.
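LFM on the ISX031 is handled inside the sensor. For comparison, a classic software-side alternative for mains-powered lighting is anti-banding: lights driven from 50 Hz or 60 Hz mains pulse at twice the line frequency, so an exposure spanning a whole number of pulses collects the same light in every frame. The sketch below illustrates that generic technique, not D3's or Sony's method; the function name and defaults are hypothetical, and it does not help with PWM-dimmed LEDs, whose frequencies vary.

```python
def flicker_safe_exposure_us(requested_us: float, mains_hz: float = 50.0) -> float:
    """Snap an exposure time to a whole number of mains flicker periods.

    Hypothetical helper for illustration. Assumes lights flicker at twice
    the mains frequency (100 Hz on 50 Hz grids, 120 Hz on 60 Hz grids).
    """
    if requested_us <= 0 or mains_hz <= 0:
        raise ValueError("exposure and mains frequency must be positive")
    # One flicker period in microseconds (light peaks twice per mains cycle).
    flicker_period_us = 1e6 / (2.0 * mains_hz)
    # Round to the nearest whole number of periods, but never below one:
    # a shorter exposure cannot average out the flicker.
    periods = max(1, round(requested_us / flicker_period_us))
    return periods * flicker_period_us


if __name__ == "__main__":
    for mains in (50.0, 60.0):
        for req in (4_000, 12_000, 20_000):
            print(f"{mains:.0f} Hz mains, {req} us requested -> "
                  f"{flicker_safe_exposure_us(req, mains):.1f} us")
```

The catch for a moving robot is the one-period floor (10 ms on a 50 Hz grid): long exposures add motion blur, which is one reason in-sensor LFM is attractive.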

Why integrators should care: latency, coverage and software

Compute comes from Intel’s Core Ultra family (codename Meteor Lake-H/U), whose tile-based design integrates a GPU and a neural processing unit (NPU) rated at up to 32 trillion operations per second (TOPS) of AI throughput in certain configurations. When workloads exceed what the integrated GPU and NPU can sustain within the thermal or latency budget, Advantech also supports external MXM GPU modules such as Intel Arc or NVIDIA Quadro-class parts.
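A peak TOPS rating is easier to reason about once it is divided across streams. The back-of-envelope sketch below assumes a hypothetical sustained-utilization factor; real utilization depends on the model, numeric precision and memory bandwidth, and is usually well below peak.

```python
def per_frame_budget_gops(peak_tops: float, cameras: int, fps: float,
                          utilization: float) -> float:
    """Rough compute budget per camera frame, in giga-operations (GOPs).

    `utilization` is an assumed fraction of the peak rating actually
    sustained; it is a hypothetical input, not a published figure.
    """
    if cameras <= 0 or fps <= 0 or not 0 < utilization <= 1:
        raise ValueError("invalid input")
    sustained_gops_per_s = peak_tops * 1_000 * utilization  # 1 TOPS = 1,000 GOPS
    frames_per_s = cameras * fps
    return sustained_gops_per_s / frames_per_s


if __name__ == "__main__":
    # 32 TOPS peak, six cameras at 30 fps, assumed 30% sustained utilization.
    print(f"{per_frame_budget_gops(32, 6, 30, 0.3):.1f} GOPs per frame")
```

Comparing that per-frame figure with a detector's operation count per inference (often quoted in multiply-accumulates, where one MAC counts as two operations) gives a first estimate of whether all six streams fit on the integrated devices or call for an MXM card.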

AMRs are no longer judged by path-following alone. Modern fleets need centimeter-scale obstacle awareness, human-intent prediction and on-the-fly re-routing. Feeding six 2MP-12MP visual streams plus depth at 30-60 Hz into neural perception stacks demands low-latency I/O and on-board fusion to avoid the round-trip delays of cloud processing.
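A quick arithmetic check shows why I/O is the first bottleneck. The sketch computes the raw pixel rate for a camera set; the 1920x1536 resolution (roughly 3 MP, inside the 2MP-12MP range above) and 16-bit YUV 4:2:2 output are illustrative assumptions, not specifications from either vendor.

```python
def raw_video_gbps(width: int, height: int, bits_per_pixel: int,
                   fps: float, cameras: int = 1) -> float:
    """Uncompressed pixel-data rate in gigabits per second.

    Ignores blanking intervals, embedded metadata and link-layer overhead,
    so a real link budget must be somewhat higher.
    """
    return width * height * bits_per_pixel * fps * cameras / 1e9


if __name__ == "__main__":
    # Assumed example: six 1920x1536 streams in 16-bit YUV 4:2:2 at 30 fps.
    print(f"{raw_video_gbps(1920, 1536, 16, 30, cameras=6):.2f} Gbit/s")
```

Under those assumptions six streams already need about 8.5 Gbit/s before depth is added, and doubling the frame rate to 60 Hz doubles that figure.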

Engineering trade-offs: power, thermal and failure modes

Advantech’s multi-lane MIPI-CSI and GMSL support addresses the physical I/O bottleneck; RealSense provides stereo disparity at frame rates that complement the visible cameras. Crucially, Advantech exposes camera data via ROS nodes in its Robotic Suite, lowering the software lift for teams that already run ROS 2 perception pipelines.

However, throughput is only half the battle. Synchronizing GMSL streams, holding exposure steady across HDR scenes and mitigating LED flicker remain operational tasks for the integrator. Sandy Chen, senior director of Advantech North America, told The Robot Report, “At Advantech, we are devoted to enabling more robots in more scenarios,” a remark that suggests a focus on easing sensor onboarding as much as on raw sensor count.
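In ROS 2, pairing frames from different sensors is commonly delegated to the `message_filters` package's approximate-time synchronizer. The framework-free sketch below shows the underlying idea with a greedy nearest-timestamp match; the function name, the 5 ms tolerance and the sample timestamps are assumptions for illustration.

```python
from bisect import bisect_left


def pair_by_timestamp(ts_a: list[float], ts_b: list[float],
                      slop_s: float) -> list[tuple[float, float]]:
    """Greedily pair frames from two streams whose timestamps differ by <= slop_s.

    Both lists must be sorted ascending. Each frame is used at most once;
    frames with no partner inside the tolerance are dropped rather than
    fused with a stale frame from the other stream.
    """
    pairs: list[tuple[float, float]] = []
    used_b: set[int] = set()
    for t in ts_a:
        i = bisect_left(ts_b, t)
        best = None
        # Candidates: the nearest frame before t and the nearest at/after t.
        for j in (i - 1, i):
            if 0 <= j < len(ts_b) and j not in used_b:
                d = abs(ts_b[j] - t)
                if d <= slop_s and (best is None or d < abs(ts_b[best] - t)):
                    best = j
        if best is not None:
            used_b.add(best)
            pairs.append((t, ts_b[best]))
    return pairs


if __name__ == "__main__":
    camera = [0.000, 0.033, 0.067, 0.100]  # hypothetical capture times (s)
    depth = [0.002, 0.036, 0.080, 0.101]
    print(pair_by_timestamp(camera, depth, slop_s=0.005))
```

Here the camera frame at 0.067 s is dropped because the nearest depth frame is 13 ms away; a greedy match like this is not globally optimal, but it keeps each step cheap enough to run per frame.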

Packing a Core Ultra chip and six camera feeds into a mobile base forces designers to juggle power budgets and heat. Intel’s Meteor Lake-H/U improves performance per watt over prior generations, but sustained multi-camera inference will still push thermal limits in a small chassis. That trade-off leads to two practical choices: throttle inference (lower frame rates or lighter models) to keep junction temperatures within safe limits, or offload heavy inference batches to an MXM GPU or an edge server.
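The first option can be automated. A minimal sketch, assuming the application can read a package temperature and set its own inference rate: a governor that steps through a hypothetical ladder of frame rates, with hysteresis so it does not oscillate around a single threshold. The class name, rates and temperature thresholds are illustrative placeholders only.

```python
from dataclasses import dataclass


@dataclass
class ThermalGovernor:
    """Step the inference frame rate down when hot and back up when cool.

    All thresholds are hypothetical; real limits come from the processor's
    thermal specification and the chassis design.
    """
    rates_hz: tuple[float, ...] = (30.0, 15.0, 10.0, 5.0)  # fastest first
    throttle_above_c: float = 90.0   # step down above this temperature
    recover_below_c: float = 80.0    # step up only below this (hysteresis)
    level: int = 0                   # current index into rates_hz

    def update(self, temp_c: float) -> float:
        """Feed one temperature reading; return the inference rate to use."""
        if temp_c > self.throttle_above_c and self.level < len(self.rates_hz) - 1:
            self.level += 1
        elif temp_c < self.recover_below_c and self.level > 0:
            self.level -= 1
        return self.rates_hz[self.level]


if __name__ == "__main__":
    gov = ThermalGovernor()
    for reading in (75, 92, 95, 85, 78, 76):
        print(reading, "C ->", gov.update(reading), "Hz")
```

Between the two thresholds the governor holds its current rate, which is what prevents rapid toggling when the temperature hovers near a limit.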

Camera-level reliability matters in industrial spaces. IP67 housings and the HDR-capable Sony ISX031 sensor reduce ingress and glare failures, but a single mis-synchronized camera can produce artifacts that downstream trackers interpret as obstacles. The partners emphasize validation and ODM support; Scott Reardon, CEO of D3 Embedded, said the collaboration helps customers “get their unique products to market faster and deploy edge solutions at scale.”
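A lightweight runtime check can catch a drifting camera before its frames reach the tracker. The sketch compares each camera's latest capture timestamp with the median across all cameras and flags outliers; it assumes every timestamp is on a common clock, and the camera IDs, timestamps and 5 ms tolerance are hypothetical.

```python
from statistics import median


def find_out_of_sync(latest_ts: dict[str, float], tolerance_s: float) -> list[str]:
    """Return camera IDs whose latest frame timestamp strays from the median.

    `latest_ts` maps a camera ID to the capture time (seconds) of its most
    recent frame. Using the median as the reference keeps one bad camera
    from dragging the reference toward itself.
    """
    if not latest_ts:
        return []
    reference = median(latest_ts.values())
    return sorted(cam for cam, ts in latest_ts.items()
                  if abs(ts - reference) > tolerance_s)


if __name__ == "__main__":
    snapshot = {"front": 10.000, "left": 10.001, "right": 9.999,
                "rear": 10.002, "depth_front": 10.000, "depth_rear": 9.940}
    print(find_out_of_sync(snapshot, tolerance_s=0.005))
```

A flagged camera's frames could then be held out of fusion until it resynchronizes, instead of surfacing as phantom obstacles.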

Safety envelopes must be re-specified when perception changes. Faster detection shortens the distance a robot travels before it starts braking, which reduces total stopping distance and can allow closer human-robot coexistence. It also means re-certifying emergency-stop response against functional-safety standards such as ISO 13849, along with practices adapted from the automotive standard ISO 26262.
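The stopping-distance argument can be made concrete with a simple kinematic model: the robot keeps moving at speed v during the perception-to-brake latency t, then decelerates at a constant rate a, so the total distance is d = v·t + v²/(2a). Faster perception shrinks only the first term. The speed, latency and deceleration values below are hypothetical, and the model ignores brake actuation delay and floor-friction limits.

```python
def stopping_distance_m(speed_mps: float, latency_s: float,
                        decel_mps2: float) -> float:
    """Distance covered from hazard appearance to standstill.

    Constant-speed travel during the perception-to-brake latency, followed
    by constant deceleration: d = v * t + v^2 / (2 * a).
    """
    if speed_mps < 0 or latency_s < 0 or decel_mps2 <= 0:
        raise ValueError("invalid input")
    reaction = speed_mps * latency_s             # travelled before braking starts
    braking = speed_mps ** 2 / (2 * decel_mps2)  # travelled while braking
    return reaction + braking


if __name__ == "__main__":
    # Hypothetical AMR: 1.5 m/s top speed, 1.0 m/s^2 braking deceleration.
    for latency in (0.2, 0.1):
        print(f"{latency * 1000:.0f} ms latency -> "
              f"{stopping_distance_m(1.5, latency, 1.0):.3f} m")
```

In this example, halving latency from 200 ms to 100 ms saves 15 cm, while the 1.125 m braking term is unchanged; safety margins therefore still hinge on speed limits and brake performance.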

Who stands to win and where this fits on the TRL ladder

Sources

- Advantech integrates compute with D3 Embedded sensing for mobile robots - The Robot Report, 2025-11-28

- AFE-R360 product page - Advantech, 2025-01-01

- DesignCore Discovery PRO Series - D3 Embedded, 2025-01-01

- Intel RealSense Depth Camera D457 - Intel RealSense, 2024-06-01