Age Verification Rules Threaten Privacy

By Jordan Vale

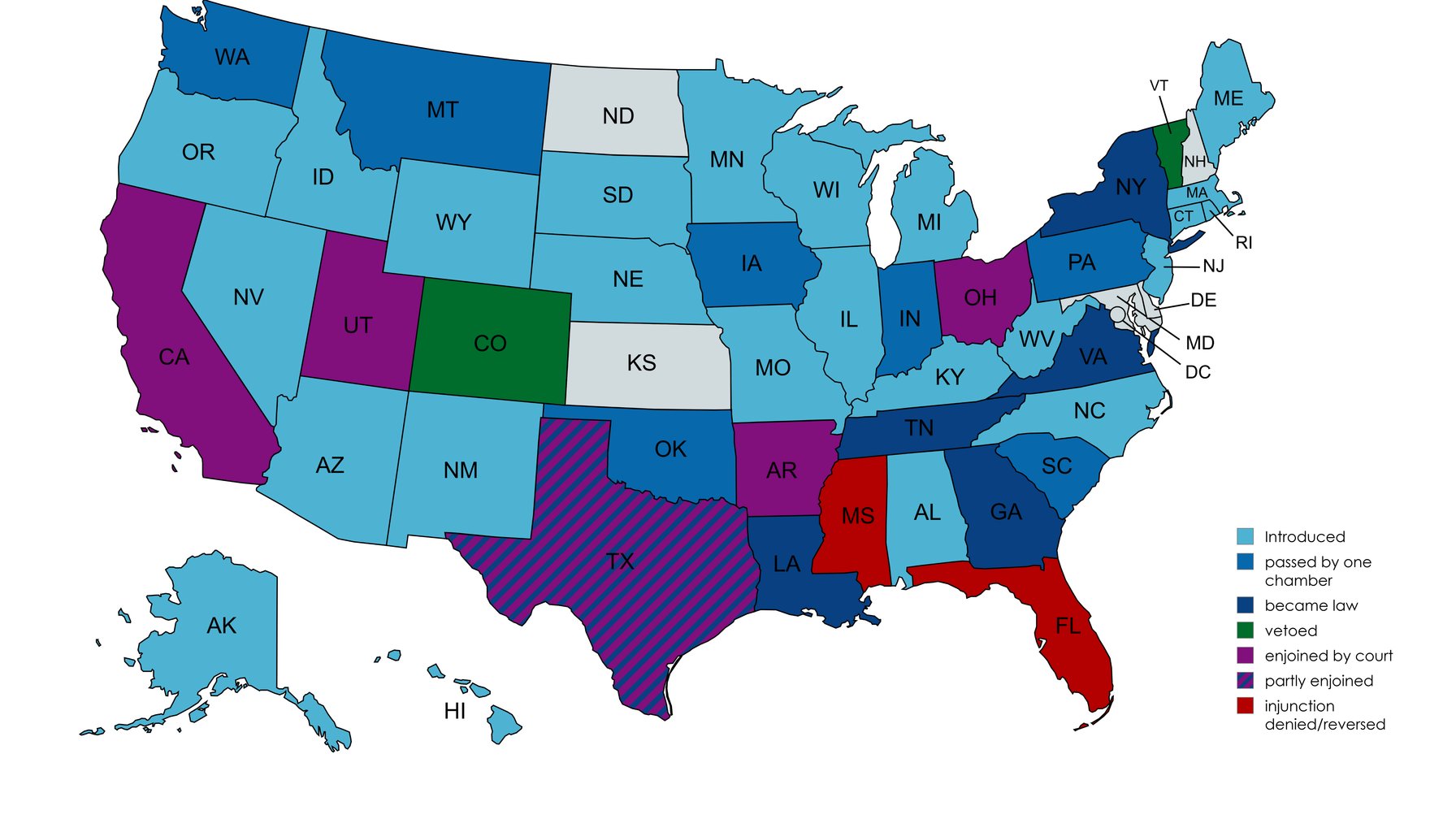

Image / Wikipedia - Social media age verification laws in the United States

Your ID could become your passport to the web.

A new wave of age verification laws aims to gate online content by confirming users’ ages, but civil-liberties advocates say the price is paid in privacy and free expression. The latest EFFector issue highlights how platforms like Discord experimented with mandatory age checks and how a leaked Meta memo on face-scanning technology signals broader, often opaque, stakes in digital identity. The core argument: once you require people to prove how old they are, you are setting up an ongoing data harvest, not just a one-time check.

The logic of age gates sounds simple: ensure minors can’t access content deemed inappropriate, while adults can. In practice, the mechanisms are fraught. ID-based verification creates a centralized trail of personal data that can be mined, leaked, or repurposed. The same systems that confirm a user’s age can confirm other sensitive attributes or enable cross-service tracking, turning a quick login into a long-term dossier. And when age gates rely on biometric verification or facial recognition, the privacy harms multiply: even accidental data sharing can enable future profiling, matching with advertiser records, or easier identity theft.

The sticker shock goes beyond privacy. Age verification can chill speech, especially for younger users, marginalized communities, or people without straightforward government IDs. If disclosure of age becomes a prerequisite for participation, some voices may stay off platforms altogether or be forced into less-regulated corners of the web. The EFF’s reporting underscores this tension: the goal is safety and compliance, but the consequence can be diminished visibility for legitimate expression and a more winner-take-all online environment where only those who can navigate or afford verification survive.

From a product and operations standpoint, the challenge is real. Implementing robust age checks is not a one-off feature; it reshapes onboarding, data contracts, and risk management. If a platform relies on third-party identity providers, it inherits their vulnerabilities and incident response timelines. If it stores any age-related data even briefly, it faces retention obligations and potential exposure in a breach. And if the system is not designed to anonymize or minimize data, it creates a surveillance-by-default posture that can outlive the policy intent.
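To make the data-minimization point concrete, here is a minimal sketch in Python of a check that reduces a birthdate to a yes/no answer and keeps nothing else. It is an illustration under stated assumptions, not a description of any platform's actual system: the names `AgeAttestation`, `attest_age`, and `is_expired`, the 18-year threshold, and the 30-day retention window are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class AgeAttestation:
    """The only record kept: a yes/no answer and the date it must be deleted."""
    is_adult: bool
    expires_on: date


def years_between(birthdate: date, today: date) -> int:
    """Whole years elapsed from birthdate to today."""
    had_birthday = (today.month, today.day) >= (birthdate.month, birthdate.day)
    return today.year - birthdate.year - (0 if had_birthday else 1)


def attest_age(birthdate: date, today: date,
               threshold: int = 18, retention_days: int = 30) -> AgeAttestation:
    """Reduce a birthdate to a boolean; the birthdate is not part of the result.

    threshold and retention_days are assumed values chosen for illustration.
    """
    is_adult = years_between(birthdate, today) >= threshold
    return AgeAttestation(is_adult=is_adult,
                          expires_on=today + timedelta(days=retention_days))


def is_expired(record: AgeAttestation, today: date) -> bool:
    """True once the record is past its retention window and should be purged."""
    return today > record.expires_on
```

Even a record this small is still age-related data with a retention obligation, which is why the sketch carries an expiry date. It also does nothing about what a third-party identity provider retains on its side.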

The debate over online age verification isn’t a technocratic detail; it’s a test of how much of the online world we’re willing to trade for safety. If the price is a user’s most sensitive data stored by a growing set of actors, it may be too high a price for communities that rely on open, expressive digital spaces—and for the people who already bear the burden of digital surveillance in daily life.
