AI Growth Accelerates on Three Compute Levers

By Alexander Cole

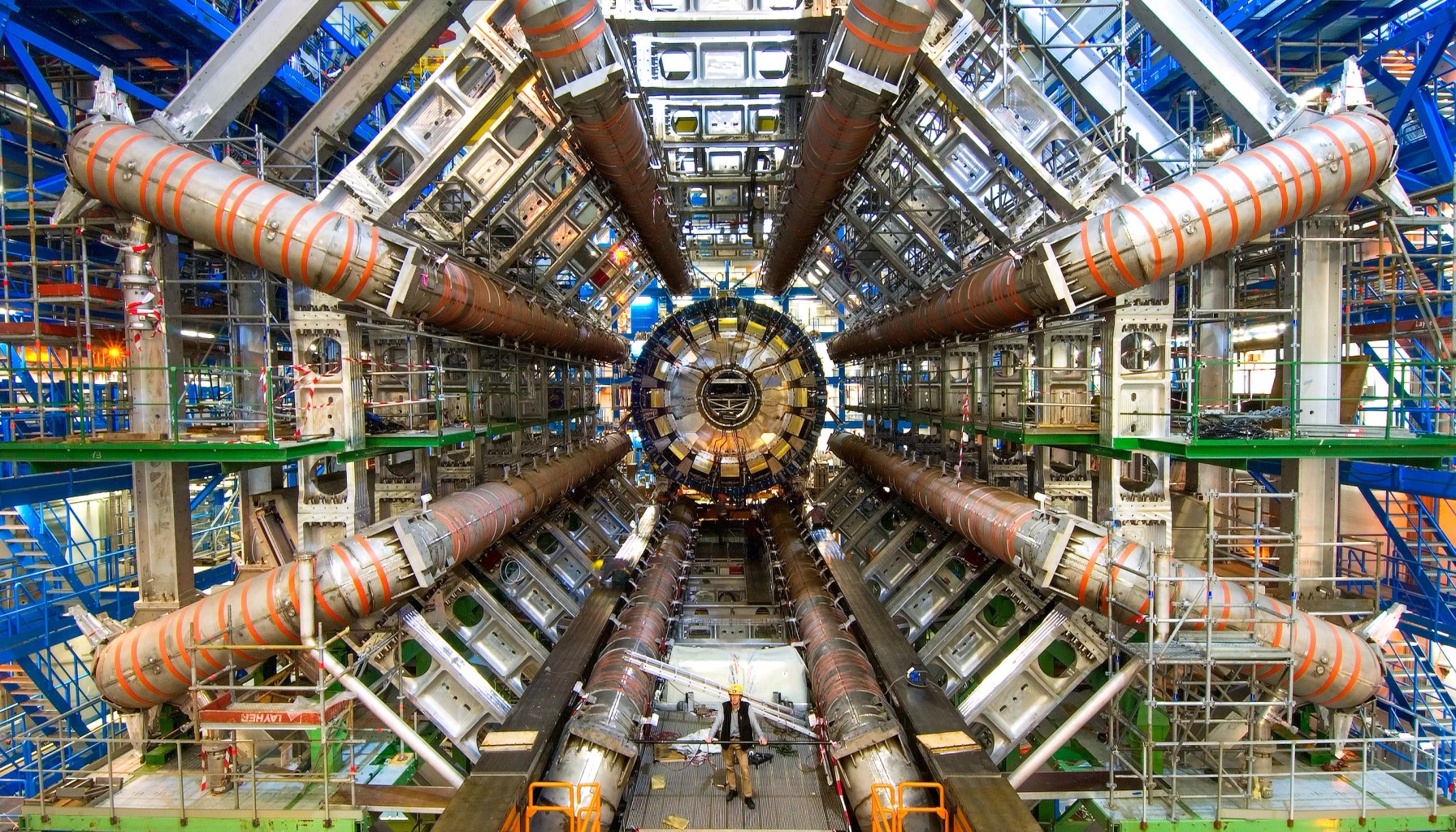

Image / technologyreview.com

AI growth isn’t hitting a wall; it’s being turbocharged by hardware.

The Download, MIT Technology Review’s weekday tech briefing, highlights Mustafa Suleyman’s argument that the so-called “development wall” for AI is unlikely to appear soon. Suleyman, CEO of Microsoft AI and a co-founder of DeepMind, frames the trajectory around three hardware-and-architecture accelerators that turn brute compute into real progress: faster basic calculators, high-bandwidth memory, and technologies that knit many GPUs into a single, colossal supercomputer.

First, faster basic calculators (more raw FLOPs, delivered more efficiently) drive the pace of model training and iteration. The message isn’t just more GPUs, but better processors, smarter accelerators, and software that extracts more work per watt. In practical terms, teams can train bigger models with more complex regimes without costs escalating endlessly, provided they pair the hardware with software optimizations that keep compute utilization high.
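To make the utilization point concrete, here is a minimal back-of-the-envelope sketch. It assumes the widely used rule of thumb that training a dense transformer costs roughly 6 FLOPs per parameter per training token; the function name, the example figures, and the model FLOPs utilization (MFU) parameter are illustrative assumptions, not numbers from Suleyman or the newsletter.

```python
def training_time_seconds(n_params: float, n_tokens: float,
                          peak_flops_per_gpu: float, n_gpus: int,
                          mfu: float) -> float:
    """Rough wall-clock estimate for training a dense transformer.

    Assumes the common ~6 * params * tokens FLOPs approximation; mfu is the
    fraction of peak hardware throughput the software actually sustains.
    """
    if not 0.0 < mfu <= 1.0:
        raise ValueError("mfu must be in (0, 1]")
    total_flops = 6.0 * n_params * n_tokens          # forward + backward passes
    sustained = peak_flops_per_gpu * n_gpus * mfu    # FLOP/s actually achieved
    return total_flops / sustained


if __name__ == "__main__":
    # Hypothetical figures: 1B params, 10B tokens, 10 GPUs at 1e15 peak FLOP/s.
    for mfu in (0.25, 0.5):
        hours = training_time_seconds(1e9, 1e10, 1e15, 10, mfu) / 3600
        print(f"MFU {mfu:.0%}: ~{hours:.1f} hours")
```

In this simple model, doubling utilization halves the estimate, which has the same effect as doubling the GPU count.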

Second, high-bandwidth memory (HBM) and related memory technologies. Memory bandwidth has long been a bottleneck in training and inference, especially for large transformers and other data-hungry architectures. Suleyman’s view is that advances in memory work alongside faster compute to unlock previously unreachable scales. For practitioners, this means that memory-efficient model design, careful data-pipeline planning, and memory-centric training techniques can extract more value from the same hardware budget.
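One standard way to reason about the memory bottleneck is the roofline model: a kernel’s attainable throughput is capped either by peak compute or by memory bandwidth multiplied by its arithmetic intensity (FLOPs per byte moved). The sketch below applies it to a matrix multiply. The accelerator figures (1e15 FLOP/s peak, 3e12 bytes/s of memory bandwidth) are hypothetical round numbers, and counting each matrix as read or written exactly once is a simplifying assumption.

```python
def matmul_intensity(m: int, n: int, k: int, bytes_per_elem: int = 2) -> float:
    """FLOPs per byte for C[m,n] = A[m,k] @ B[k,n], assuming each matrix is
    moved to or from memory exactly once (an idealized lower bound on traffic)."""
    flops = 2 * m * n * k                                   # multiply + add per term
    bytes_moved = bytes_per_elem * (m * k + k * n + m * n)  # read A, B; write C
    return flops / bytes_moved


def attainable_flops(intensity: float, peak_flops: float, mem_bw: float) -> float:
    """Roofline: throughput is limited by compute or by memory bandwidth."""
    return min(peak_flops, intensity * mem_bw)


if __name__ == "__main__":
    PEAK, BW = 1e15, 3e12  # hypothetical accelerator: FLOP/s and bytes/s
    cases = {
        "matrix-vector (batch-1 decoding)": (8192, 1, 8192),
        "large square matmul": (8192, 8192, 8192),
    }
    for name, (m, n, k) in cases.items():
        ai = matmul_intensity(m, n, k)
        perf = attainable_flops(ai, PEAK, BW)
        bound = "memory" if perf < PEAK else "compute"
        print(f"{name}: {ai:.1f} FLOP/byte -> {perf:.2e} FLOP/s ({bound}-bound)")
```

Under these assumptions the matrix-vector case does about one FLOP per byte, so faster memory, not more FLOPs, is what would speed it up; that is the practical case for HBM.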

Third, interconnect technologies that turn dispersed GPUs into enormous supercomputers. The idea is not just clusters of GPUs, but high-speed interconnects and software stacks that let many accelerators behave as a unified engine. That makes it possible to train larger models and exploit broader parallelism without wall-clock time growing in step with the workload. The practical upshot is that teams can scale both model size and data volume more aggressively, as long as their infrastructure keeps the GPUs synchronized without the network becoming the bottleneck.
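Keeping GPUs in sync during data-parallel training usually means an all-reduce of gradients after each step. Below is a minimal pure-Python simulation of ring all-reduce, one widely used collective algorithm; it is a teaching sketch of the data movement, not how any particular library implements it. Plain lists stand in for GPU buffers, and the function name is hypothetical.

```python
from typing import List


def ring_allreduce(buffers: List[List[float]]) -> List[List[float]]:
    """Simulate a ring all-reduce (sum) across len(buffers) workers."""
    p = len(buffers)
    n = len(buffers[0])
    if any(len(b) != n for b in buffers):
        raise ValueError("all buffers must have the same length")
    data = [list(b) for b in buffers]  # copy so the inputs stay untouched
    # Chunk k covers indices [bounds[k], bounds[k + 1]).
    bounds = [k * n // p for k in range(p + 1)]

    def chunk(k: int) -> range:
        return range(bounds[k], bounds[k + 1])

    # Phase 1, reduce-scatter: after p-1 steps, worker i holds the full
    # sum of chunk (i + 1) % p.
    for step in range(p - 1):
        # Snapshot all outgoing messages first so sends happen "simultaneously".
        msgs = [((i - step) % p, [data[i][j] for j in chunk((i - step) % p)])
                for i in range(p)]
        for i, (k, payload) in enumerate(msgs):
            dst = (i + 1) % p
            for j, v in zip(chunk(k), payload):
                data[dst][j] += v

    # Phase 2, all-gather: each fully reduced chunk travels once around the ring.
    for step in range(p - 1):
        msgs = [((i + 1 - step) % p, [data[i][j] for j in chunk((i + 1 - step) % p)])
                for i in range(p)]
        for i, (k, payload) in enumerate(msgs):
            dst = (i + 1) % p
            for j, v in zip(chunk(k), payload):
                data[dst][j] = v
    return data


if __name__ == "__main__":
    # Three hypothetical workers; every worker ends with [12, 15, 18].
    print(ring_allreduce([[1, 2, 3], [4, 5, 6], [7, 8, 9]]))
```

Each worker sends 2(p - 1) chunks of roughly n/p elements, about 2n in total no matter how many GPUs join the ring, so per-link bandwidth and latency, rather than GPU count, tend to set the communication cost. That is one reason fast interconnects matter.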

Taken together, the piece argues that exponential AI progress is less about a single breakthrough and more about a trio of hardware-software ecosystems maturing in concert. It’s a narrative that resonates with product teams and startups racing to deploy larger, smarter models: the bottleneck is increasingly getting the right mix of compute, memory, and connectivity, not simply buying more GPUs.

For those shipping in the current quarter, a few practitioner takeaways emerge. First, expect compute budgets to dominate cost discussions as models scale; plan for scalable memory and robust data pipelines upfront. Second, invest in accelerator-aware software and memory-efficient training techniques—sparsity, quantization, and smarter data flows can lift throughput without proportional cost jumps. Third, align cloud and on-prem strategy to access large, fast interconnects; network bottlenecks can erase gains from better GPUs. Finally, keep an eye on energy and cooling as you grow; hardware efficiency and thermals become a competitive differentiator in practice, not just theory.
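As one illustration of the quantization takeaway, the sketch below applies symmetric per-tensor int8 quantization, a common baseline scheme: values are scaled so the largest magnitude maps to 127, which stores each weight in 1 byte instead of 4 for float32. The function names and sample weights are hypothetical, and production toolkits typically use finer-grained (per-channel or per-group) scales.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantization: q = round(v / scale)."""
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0  # avoid dividing by zero
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> List[float]:
    """Map int8 codes back to approximate float values."""
    return [x * scale for x in q]


if __name__ == "__main__":
    weights = [0.82, -1.30, 0.004, 0.51, -0.27]  # hypothetical weights
    q, scale = quantize_int8(weights)
    approx = dequantize_int8(q, scale)
    max_err = max(abs(a - b) for a, b in zip(weights, approx))
    # Rounding error is bounded by scale / 2 per value.
    print(f"codes={q} scale={scale:.5f} max_error={max_err:.5f}")
```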

Analogy time: imagine stitching together a fleet of high-performance cars with a highway system that’s twice as wide and twice as fast. The power isn’t just in the engines, but in the road and the traffic control that lets dozens of cars move as one. That’s the essence of Suleyman’s thesis—turning a constellation of GPUs into a single, massive learn-and-infer engine through smarter memory and faster interconnects.

Limitations and caveats are real. Hardware progress can outpace the software and data efficiency needed to extract value, and supply chains, energy costs, and regulatory scrutiny could temper the pace. Not every upgrade yields proportional gains, especially if data, alignment, or governance issues aren’t kept in check. For startups racing to ship this quarter, the prudent path is to pair aggressive scale plans with disciplined memory management, cost-aware architecture design, and a clear plan for sustainable compute growth.
