DeepSeek V4 Delivers Open World Models on Ascend Chips

By Alexander Cole

Image / technologyreview.com

DeepSeek’s V4 targets long-context world modeling and runs on Huawei Ascend hardware, a notable turn in the AI hardware race.

On Friday, DeepSeek released a preview of V4, its long-awaited flagship model. The company says the new design lets the model process much longer prompts, handling large swaths of text more efficiently than prior generations. Crucially, the model remains open source. Coverage suggests its performance now matches leading closed-source rivals from Anthropic, OpenAI, and Google. It is also DeepSeek’s first release optimized for Huawei’s Ascend chips, a strategic move that tests China’s reliance on Nvidia for accelerators.

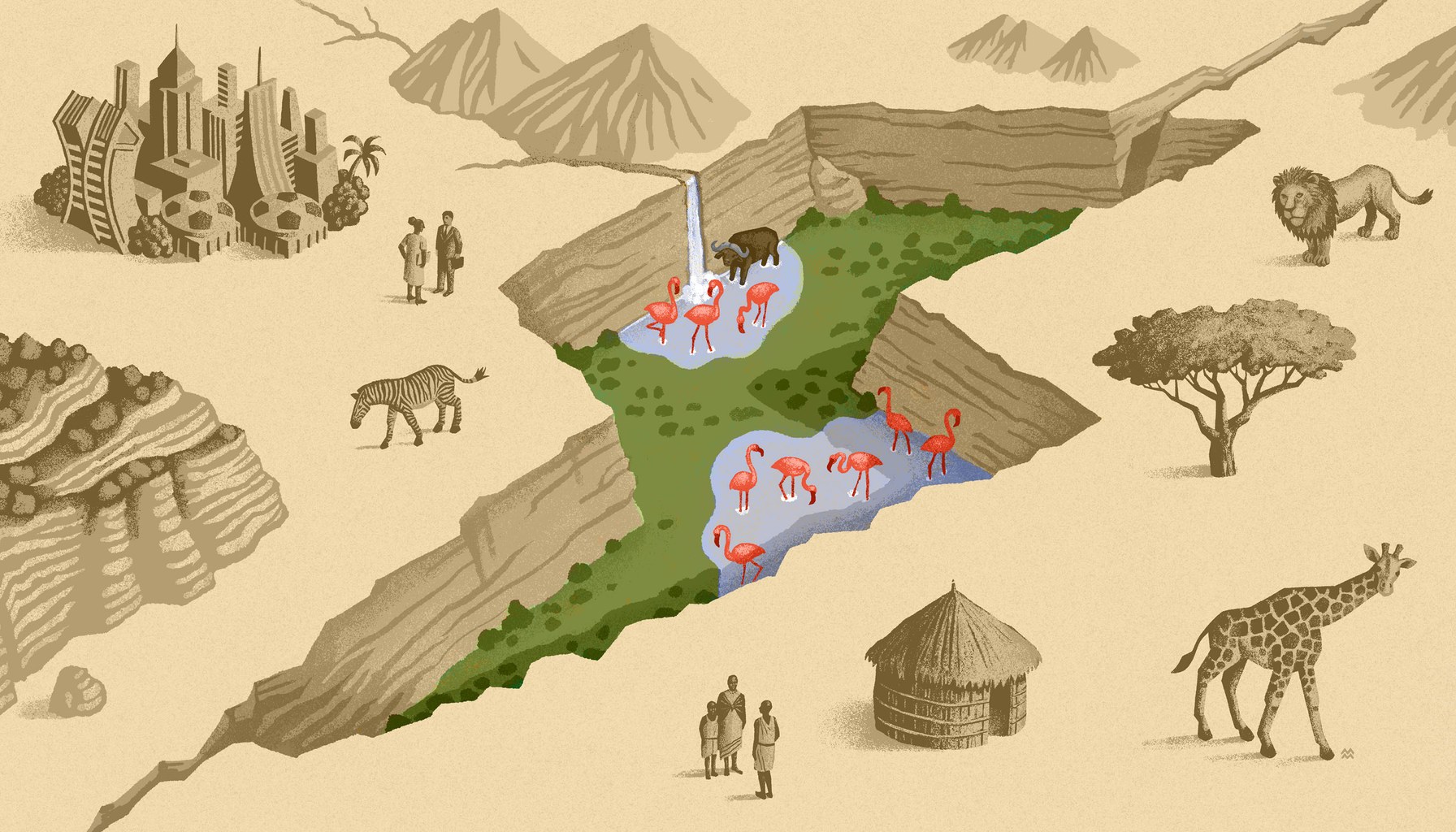

The paper frames world models as a path to bridging language models with the physical world. In this view, a world model stores and reasons about a broad, persistent representation of tasks and environments, enabling planning and coordinated action beyond what a text-only model can do. The discourse around world models has grown influential, with figures like Fei-Fei Li and Yann LeCun championing the idea that intelligent agents need more than short memory and next-token prediction to reliably navigate real tasks, including robotics and dynamic coding environments.

From a product and industry perspective, the move matters for several reasons. First, DeepSeek’s insistence on open-sourcing V4 lowers the barrier to iteration for smaller teams that want to experiment with world-model capabilities without buying into expensive vendor stacks. Second, by advertising parity with top closed models, the company signals that open architectures can compete on the same performance plane, at least on the targeted benchmarks cited in coverage. Third, the Ascend optimization suggests a broader industry shift: while Nvidia GPUs still dominate, regional players are testing accelerators tailored to specific workloads, and open models can serve as a proving ground for such hardware ecosystems.

For engineers and product people, three practical takeaways stand out. First, long-context processing opens doors for complex workflows that benefit from planning and multi-step reasoning, such as code generation with long-term project memory or multi-document synthesis that preserves context across chapters or modules. Second, if you’re evaluating a world-model stack, expect to weigh the improvement in context window against hardware availability; DeepSeek’s Ascend focus means strong performance when running on that hardware, but adoption may hinge on how easily cloud providers and edge devices can support it alongside Nvidia-based infrastructure. Finally, the open-source angle invites scrutiny of reproducibility and governance: can communities reproduce the exact prompts, data, and evaluation conditions needed to claim parity with closed rivals?

The article does flag limitations and uncertainties. Specific benchmark datasets and numeric scores are not detailed, and the claim of parity rests on a broader competitive narrative rather than on publicly disclosed numbers. Real-world deployments will still wrestle with latency, energy use, and the reliability of long-horizon planning in messy environments. In addition, the Ascend dependency introduces a potential chokepoint: if the ecosystem for these chips isn’t as widespread as Nvidia’s, some teams may struggle to ship at scale without direct access to the hardware or cloud support for it.

For teams shipping this quarter, the news points toward a broader trend: open world models are inching closer to production viability, particularly for tasks that require memory and planning over longer horizons. Expect more startups to experiment with long-context agents, and expect cloud and hardware platforms to respond with more configurable stacks that balance open models with accelerators. If you’re building features that hinge on “thinking ahead” rather than only predicting the next token, V4 is a signal to start prototyping now, even if you still need to navigate hardware availability and benchmark transparency.
