Sovereign AI Gap Riles Global Partners

By Jordan Vale

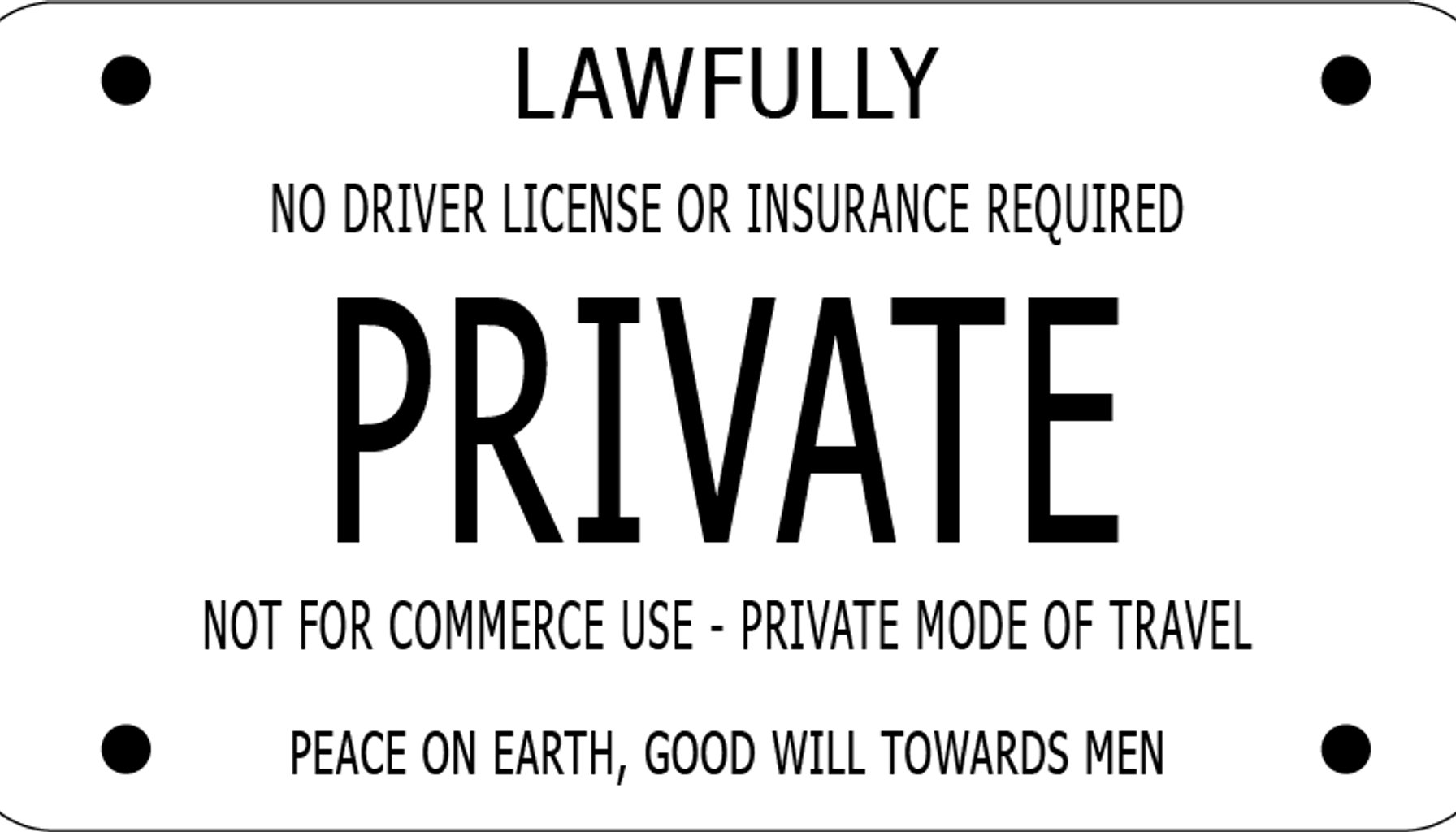

Image / Wikipedia - Sovereign citizen movement

The sovereignty gap in U.S. AI statecraft just got bigger.

Washington’s push to export “sovereign AI”, which offers partners deployment control over systems built on American technology, has collided with efforts by other nations to pursue IT sovereignty and curb their reliance on U.S. policy discretion. In a pointed Lawfare op-ed, Center for Security and Emerging Technology analyst Pablo Chavez argues that the central question isn’t whether Washington can sell more AI, but whether those sales come with an “assurance layer” that actually reduces uncertainty for partners.

The core idea, Chavez writes, is to attach governance guarantees to American AI stacks so foreign governments can trust that deployments meet local laws, security norms, and data rules without sacrificing the benefits of U.S. software. Policy documents show that the United States wants to offer deployment control—edge and on-prem capabilities, audit rights, and update governance—while preserving access to American innovation and the security guarantees that come with U.S. export controls and supplier ecosystems. The result, Chavez suggests, is a delicate bargain: access and interoperability on one side, sovereignty and policy autonomy on the other.

But many countries aren’t inclined to trust incentives alone. They are pursuing sovereignty not just to gain greater control, but to minimize exposure to sudden shifts in U.S. policy discretion and to align AI systems with local privacy regimes, procurement rules, and national security postures. The gap, in other words, is not just about where code runs, but about who sets the rules, who bears the risk, and who profits when things go wrong. If the assurance layer is thin or ill-defined, partners may hedge by building parallel, homegrown AI stacks or by insisting on stricter local data-residency and governance requirements, pathways that could reduce the scale and speed of transatlantic AI collaboration.

From a practitioner’s perspective, several tensions emerge. First, a credible assurance layer requires rigorous commitments on updates, data handling, and incident response that survive political shifts and commercial renegotiation, yet enforcement mechanisms across borders remain murky. Second, the tradeoff between supplier leverage and partner autonomy is real: granting deployment control may preserve access to cutting-edge models, but it risks entrenching U.S. tech leverage in ways that others view as strategic dependence. Third, fragmentation looms as different countries demand bespoke governance overlays, potentially multiplying compliance costs for multinational users and complicating interoperability across markets. Finally, as nations accelerate domestic AI agendas, the U.S. may need to offer more transparent standards, shared risk models, and predictable licensing to keep its ecosystem attractive.

Several developments are worth watching. Expect further diplomacy around security assurances, data governance, and cross-border incident-response protocols that would anchor a practical assurance layer. Watch how Europe, in particular, translates sovereignty rhetoric into procurement rules and regulatory alignment that could push U.S. partners toward broader autonomy. And keep an eye on whether Washington can pair its technical advantages with credible, enforceable guarantees that reassure partners without eroding the mutual benefits of collaboration.

This is not a choice between “more sovereignty” and “more American tech.” It is about designing a credible, durable bridge between the two, one that makes sovereign AI feel like a shared asset rather than a risk riding on a single policy wheel.
