Sovereign AI Gap Stirs U.S. Statecraft Debate

By Jordan Vale

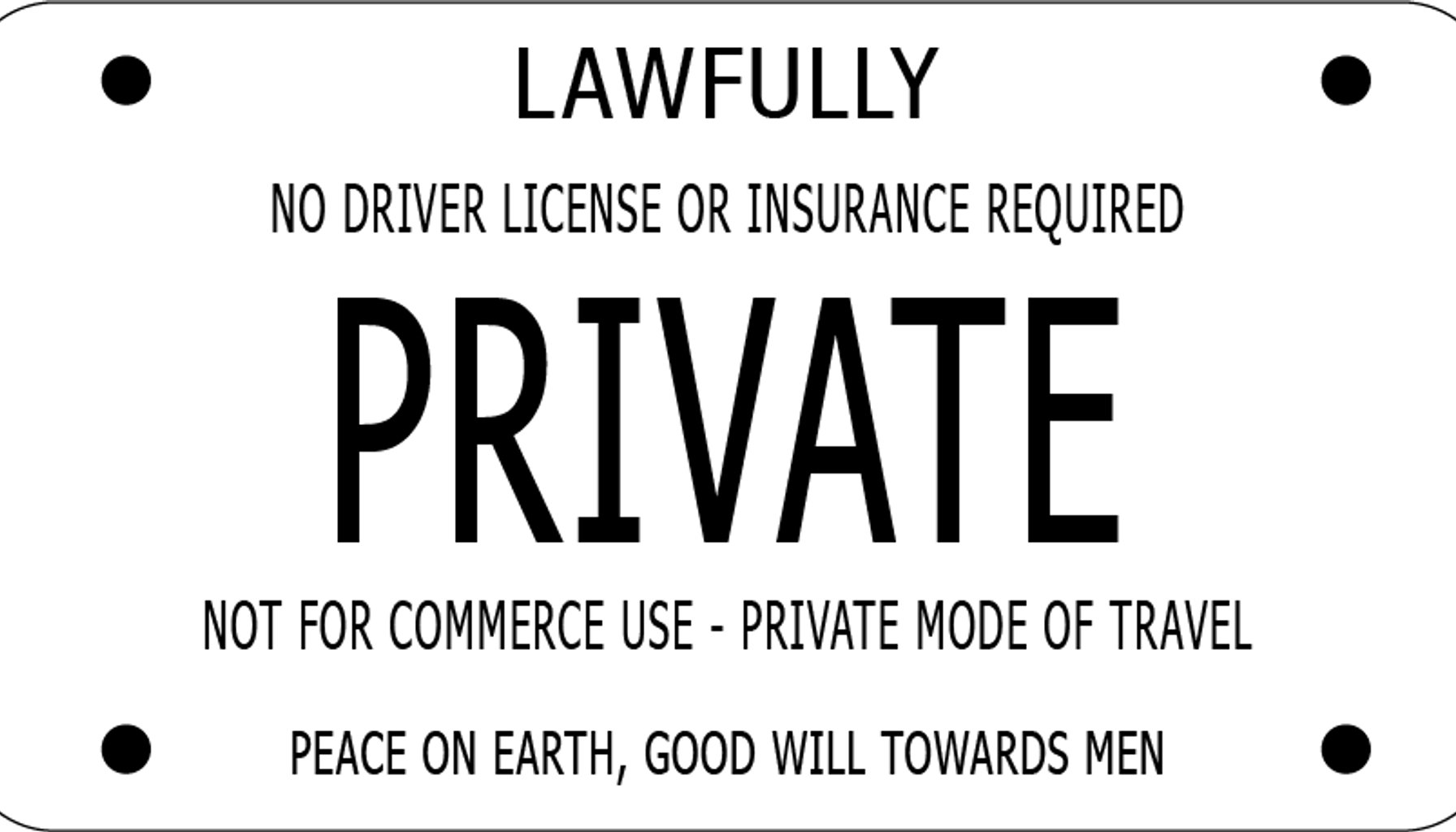

Image / Wikipedia - Sovereign citizen movement

America’s push for “sovereign AI” abroad just hit a new inflection point, as policymakers and researchers contest whether participation in U.S.-led AI ecosystems comes with enough assurances to quell partner uncertainty.

A recent Lawfare op-ed by Pablo Chavez of the Center for Security and Emerging Technology spotlights what he calls a “sovereignty gap” in U.S. AI statecraft. The gist: Washington is offering allies deployment control through American AI technology, even as many countries seek greater autonomy to limit reliance on U.S. systems and policy discretion. The decisive question, Chavez writes, is whether participation can be paired with an assurance layer that reduces uncertainty.

In practical terms, the United States is trying to persuade foreign governments and industry actors to adopt or co-develop AI deployments that are still tethered to American standards, technology stacks, and governance models. The risk, Chavez and others warn, is that countries may interpret “sovereignty” as a path to de-risk from U.S. influence, building parallel, domestically governed ecosystems that operate outside American policy levers. If that happens, the United States risks two outcomes: slower joint progress on global AI standards and a more fragmented landscape of systems that no longer interoperate.

One core tension is governance versus capability. The U.S. wants influence over how AI is deployed in sensitive areas such as defense, critical infrastructure, and security. Yet many U.S. allies insist on greater control over data, localization, and independent oversight. Chavez’s framing implies that without credible assurances (transparent rules, enforceable guarantees, and verifiable interoperability), the appeal of American AI assistance weakens. In short, sovereign AI isn’t just a technology problem; it’s a trust and governance problem.

For policymakers and industry insiders, the piece points to several practical dynamics shaping urgent decisions. First, a credible assurance layer would likely require binding rights and obligations, such as audits, independent vetting, and enforceable interoperability commitments, so partners can trust that integrating U.S.-provided AI won’t lock them into an opaque or unstable dependency. Without that, Chavez suggests, sovereignty claims may win the political battle while practical adoption stalls because uncertainty remains about control, data handling, and long-term viability.

Second, the tradeoff between leverage and autonomy will intensify. The United States believes it can offer scale, security, and advanced tooling; partners want the option to pivot if political or commercial terms shift. Industry players warn that policy ambiguity could force a multi-vendor, multi-standards reality, increasing complexity, costs, and risk of fragmentation that undermines global competitiveness.

Finally, observers should watch for concrete signs of how this theory translates into practice. Look for bilateral or regional accords that attach governance requirements to AI deployments, new interoperability standards, or procurement criteria that explicitly favor or require transparent assurance mechanisms. The absence of such signals could push partners toward purely domestic or non-U.S.–centric AI ecosystems, give them more bargaining chips in future negotiations, and amplify fragmentation in global AI governance.

From a practitioner perspective, four concrete takeaways stand out. One, an assurance framework needs teeth: binding commitments, independent audits, clear data-use boundaries, and dispute resolution processes. Two, interoperability, not just compatibility with U.S. tech, matters; without it, even well-intentioned partnerships may become brittle as national standards diverge. Three, procurement signals will be telling: if governments begin favoring tools with explicit sovereignty protections, the U.S. will need to adapt its offerings to avoid being sidelined. Four, policy continuity matters: shifting rules or opaque governance decisions can erode trust faster than the most advanced algorithms ever could.

If the sovereignty debate remains unresolved, the risk is a two-speed AI world: robust, U.S.-aligned deployments in some markets, and independently governed, locally controlled ecosystems in others. In that scenario, the promise of global AI collaboration could give way to strategic fragmentation—precisely the outcome Chavez cautions against.
