AI Compute Surges on Hardware Breakthroughs

By Alexander Cole

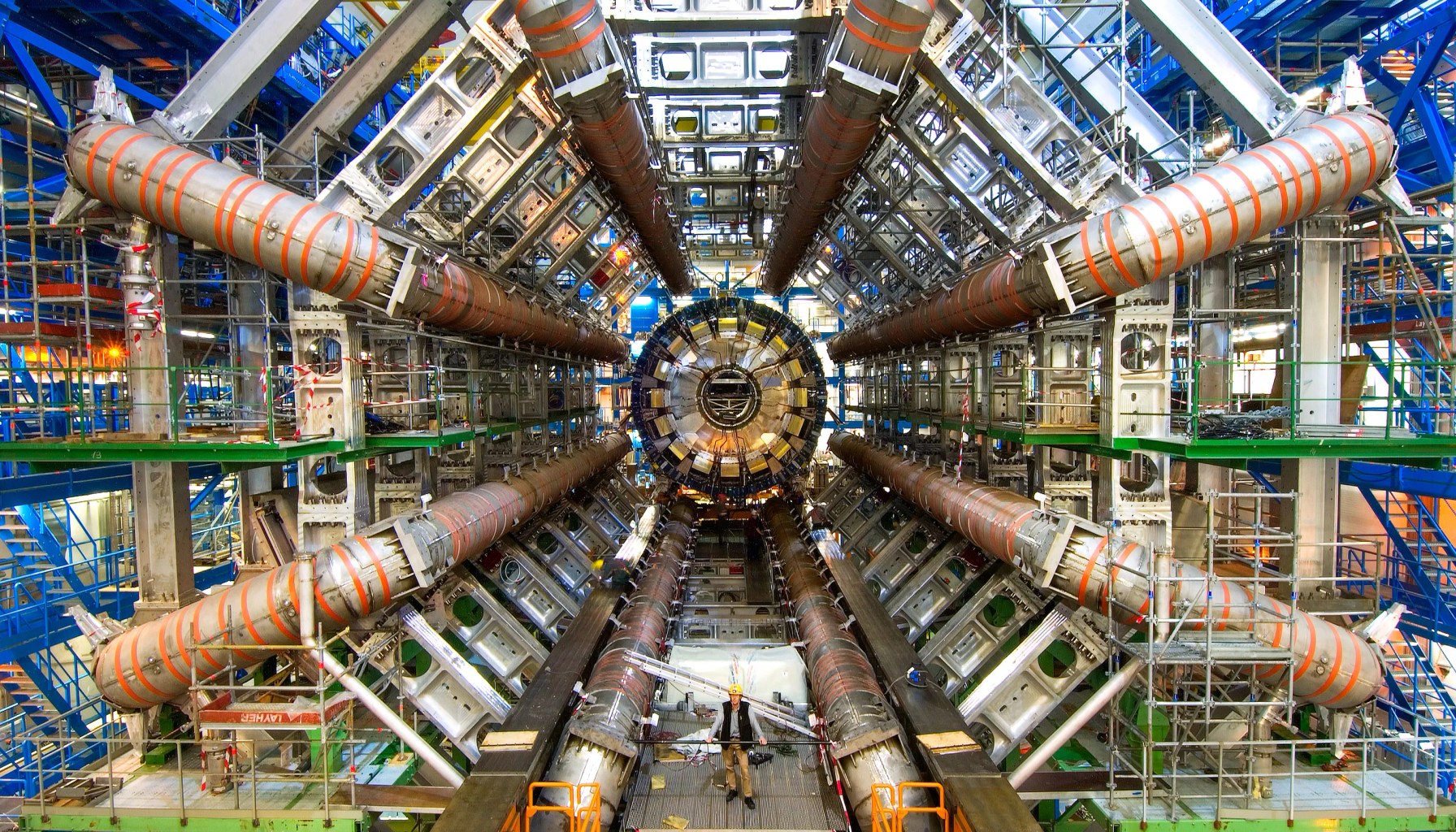

Image / technologyreview.com

Skeptics predicted AI compute would hit a wall. They were wrong.

The Download’s latest briefing centers on a simple but powerful thesis: exponential AI progress isn’t a mirage; it is sustained by concrete hardware advances that keep feeding bigger, faster models. Mustafa Suleyman, Microsoft AI chief and a co-founder of DeepMind (now Google DeepMind), argues the bottleneck narrative is outdated. Three advances, he says, are pushing progress beyond any supposed limit: faster processors (chips getting cheaper and quicker at the core arithmetic), high-bandwidth memory that moves data to and from chips at very high speeds, and interconnect technologies that stitch many separate GPUs into what behaves like one enormous accelerator. In short, the AI race isn’t running on a single advance; it rests on three that reinforce one another.

What does that really mean for engineers shipping models this quarter? The briefing (and Suleyman’s op-ed) frames compute as the enduring hinge of progress. If you can efficiently marshal thousands of GPUs as a single, coherent system, you unlock training regimes and model sizes that were previously infeasible outside a few trillion-dollar labs. The key takeaway for practitioners is not a magic unlock but a reminder that the bottleneck keeps shifting: from raw clock speed to memory bandwidth to interconnect efficiency. Each shift calls for different optimization bets, spanning data pipelines, model parallelism, memory hierarchies, and the maturity of the software stack.

For AI teams, this translates into concrete implications for 2026. First, cost and energy efficiency remain non-negotiable. Even with promises of “unlimited” compute, the bill for training giant models is real, and the fastest path to product velocity is often inference optimization, not pure training scale. Second, memory bandwidth and interconnects aren’t nice-to-haves; they’re gating factors. Feeding GPUs with data efficiently, avoiding bottlenecks in PCIe and other interconnects, and choosing architectures that minimize data movement can yield outsized gains without running into supply-chain chokepoints on the accelerators themselves. Third, software maturity matters as much as hardware. The promise of turning many GPUs into a single supercomputer hinges on robust, scalable distributed training frameworks and reliable fault tolerance.
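To make the memory-bandwidth point concrete, here is a minimal sketch of the standard roofline estimate, which caps attainable throughput at the smaller of peak compute and bandwidth times arithmetic intensity. The hardware figures (`PEAK_FLOPS`, `MEM_BW`) are hypothetical round numbers chosen for illustration, not the specs of any real accelerator, and the helper names are invented for this example.

```python
# Roofline sketch: is a matrix multiply limited by compute or by memory bandwidth?
# All hardware numbers below are hypothetical, for illustration only.

PEAK_FLOPS = 1.0e15  # assumed peak compute: 1 PFLOP/s
MEM_BW = 2.0e12      # assumed memory bandwidth: 2 TB/s


def matmul_intensity(m: int, n: int, k: int, bytes_per_elem: int = 2) -> float:
    """FLOPs per byte for an (m x k) @ (k x n) matmul, assuming each
    operand and the result cross the memory bus exactly once."""
    flops = 2 * m * n * k
    bytes_moved = bytes_per_elem * (m * k + k * n + m * n)
    return flops / bytes_moved


def attainable_flops(intensity: float) -> float:
    """Roofline bound: min(peak compute, bandwidth * arithmetic intensity)."""
    return min(PEAK_FLOPS, MEM_BW * intensity)


def bound_by(intensity: float) -> str:
    """Classify a kernel relative to the ridge point PEAK_FLOPS / MEM_BW."""
    ridge = PEAK_FLOPS / MEM_BW
    return "memory" if intensity < ridge else "compute"


if __name__ == "__main__":
    shapes = {
        "single-token decode (1 x 4096 x 4096)": (1, 4096, 4096),
        "large training matmul (4096^3)": (4096, 4096, 4096),
    }
    for name, (m, n, k) in shapes.items():
        ai = matmul_intensity(m, n, k)
        print(f"{name}: {ai:.1f} FLOP/byte, {bound_by(ai)}-bound, "
              f"ceiling {attainable_flops(ai):.2e} FLOP/s")
```

Under these assumed numbers, the single-row multiply typical of token-by-token inference sits far below the ridge point and is limited by memory bandwidth, while the large square multiply is limited by compute. That is one way to see why faster memory and less data movement can matter more than extra raw FLOPs for some workloads.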

Here are practitioner-level takeaways to watch this quarter:

From a product standpoint, this isn’t a blockbuster “one trick” moment. It’s a reminder that the edge for shipping this quarter lies in smarter use of existing hardware, smarter model deployment, and tighter integration across data, compute, and software. If you’re deciding where to allocate budget today, prioritize memory bandwidth improvements, economies of scale in your training and inference stack, and a plan for efficient end-to-end optimization rather than chasing ever-larger parameter counts.

The broader industry takeaway remains stark: the narrative of a compute cliff is giving way to one of scalable hardware-software ecosystems that enable bigger, and more practical, AI deployments. That’s the story Suleyman’s op-ed pushes: exponential growth isn’t accidental; it’s engineered by smarter hardware and smarter pipelines, together.
