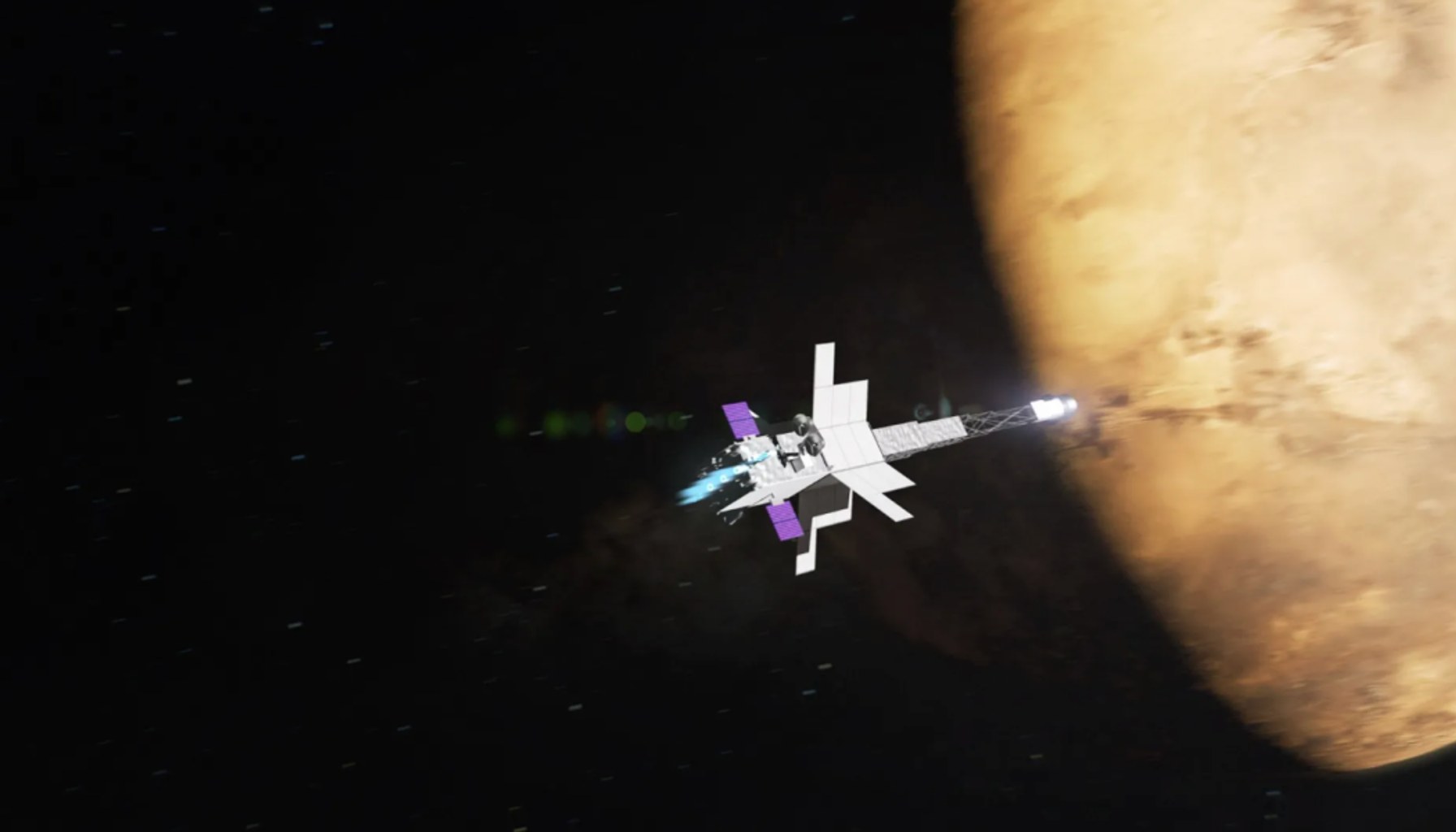

Nuclear AI Power Aims for Mars 2028

By Alexander Cole

Image / technologyreview.com

NASA plans to fly a nuclear reactor-powered spacecraft to Mars by 2028. The bold move folds autonomous AI into the propulsion and navigation stack, with enough onboard energy to run it all the way to the red planet without relying on Earth-bound compute.

The NASA reveal comes as MIT Technology Review’s The Download highlights a broader moment: AI must operate reliably in settings where power is scarce, heat is hard to shed, and distance stretches communication delays to minutes. The plan isn’t just about science-fiction hardware; it’s about a practical shift in how we think about AI compute, which moves out of the climate-controlled data center and into a moving, radiation-soaked environment where every watt and every bit matters.
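To put the distance problem in numbers: the one-way signal delay is simply distance divided by the speed of light. This is a minimal sketch, assuming approximate Earth-Mars distance extremes of about 54.6 million km (closest possible approach) and about 401 million km (near solar conjunction); the constant and function names are illustrative, not from the article.

```python
# One-way signal delay between Earth and Mars: a hard physical floor on any
# round trip to Earth-bound compute.
SPEED_OF_LIGHT_KM_S = 299_792.458  # exact by SI definition

# Approximate distance extremes in km (illustrative constants).
CLOSEST_KM = 54.6e6    # closest possible approach
FARTHEST_KM = 401e6    # near solar conjunction


def one_way_delay_min(distance_km: float) -> float:
    """One-way light-time delay in minutes for a given distance."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0


def round_trip_delay_min(distance_km: float) -> float:
    """Delay in minutes to send a query to Earth and receive a reply."""
    return 2.0 * one_way_delay_min(distance_km)


if __name__ == "__main__":
    for label, d in [("closest", CLOSEST_KM), ("farthest", FARTHEST_KM)]:
        print(f"{label}: one-way {one_way_delay_min(d):.1f} min, "
              f"round trip {round_trip_delay_min(d):.1f} min")
```

At those extremes a single question-and-answer exchange with Earth takes roughly 6 to 45 minutes before any processing, which helps explain why time-critical decisions such as hazard avoidance favor onboard compute.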

The core implication, the article notes, is energy density. A reactor-powered spacecraft could sustain substantial onboard AI workloads for years, far beyond what solar arrays or radioisotope thermoelectric generators (RTGs) typically support. In practical terms, more sophisticated perception, planning, and fault-tolerance algorithms could run locally, reducing the need to shuttle data back to Earth or to Earth-bound supercomputers for every decision. For space missions, that translates into faster autonomous hazard avoidance, more robust navigation in uncertain gravity wells, and real-time stitching of science data from onboard sensors.
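Solar arrays lose output with distance from the Sun, while a reactor's output does not depend on sunlight. The sketch below uses the inverse-square law to estimate sunlight near Mars, then converts a continuous power allocation into a sustained inference rate. The 100 W compute allocation and 0.5 J per inference are hypothetical placeholders, not figures from NASA or the article.

```python
# Back-of-envelope energy budget. The workload figures in the example are
# hypothetical placeholders, not NASA or article numbers.
SOLAR_CONSTANT_W_M2 = 1361.0  # mean solar irradiance at 1 AU
MARS_ORBIT_AU = 1.524          # Mars' mean distance from the Sun


def irradiance_w_m2(distance_au: float) -> float:
    """Solar irradiance scales with the inverse square of distance."""
    return SOLAR_CONSTANT_W_M2 / distance_au ** 2


def sustained_inferences_per_s(power_w: float, joules_per_inference: float) -> float:
    """Inference rate a continuous power allocation can sustain (W = J/s)."""
    if joules_per_inference <= 0:
        raise ValueError("energy per inference must be positive")
    return power_w / joules_per_inference


if __name__ == "__main__":
    ratio = irradiance_w_m2(MARS_ORBIT_AU) / SOLAR_CONSTANT_W_M2
    print(f"Sunlight near Mars: {irradiance_w_m2(MARS_ORBIT_AU):.0f} W/m^2 "
          f"({ratio:.0%} of Earth's)")
    # Hypothetical: 100 W reserved for compute, 0.5 J per model inference.
    print(f"Sustained rate: {sustained_inferences_per_s(100.0, 0.5):.0f} inferences/s")
```

Halving the energy per inference doubles the sustained rate at the same power, which is why per-inference efficiency still matters even when a reactor is available.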

The article sketches a future where AI inference lives in a tightly bounded power envelope, with compute decisions constrained by radiation hardening, shielding mass, and thermal limits. But the path is not simple. It also notes that parts of the project remain shrouded in mystery, with experts outlining the many technical and regulatory questions that must be answered before a reactor-powered Mars mission becomes routine.

From an AI and edge-compute perspective, the move is a useful bellwether for the broader industry. Think of it like this: you’re shipping a data center, but the data center is strapped to a rocket and has to endure cosmic radiation, limited cooling, and a long, unforgiving trip to Mars. The analogy shows why this matters beyond space: it spotlights design constraints that many edge AI teams already face, namely tight energy budgets, the need for fault tolerance, and the imperative to run useful models offline.

Two practitioner takeaways stand out. First, the energy budget and reliability constraints will push AI hardware toward deeper energy efficiency and radiation-tolerant design. For products shipping this quarter, that translates into prioritizing energy-aware inference, quantization, and model architectures that remain robust under bit-flips and single-event upsets. In practice, teams should stress-test models for offline, continuous operation under degraded hardware, and favor lightweight, certifiably reliable inference paths over flashy, cloud-reliant capabilities. Second, the Mars mission reinforces the value of onboard autonomy that can function with intermittent connectivity. In the real world, that means designing AI systems with strong offline safety, fallbacks, and self-diagnostic capabilities—precisely the kinds of resilience concerns that plenty of edge deployments still struggle with.
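One way to act on the advice to stress-test models under bit-flips is software fault injection: quantize the weights, flip single bits at random to mimic single-event upsets, and measure how far the output moves. This is a hypothetical toy (a four-weight linear model with symmetric int8 quantization), not a method described in the article; a real campaign would target full models and the memory layout of the actual hardware.

```python
import random


def quantize_int8(weights, scale):
    """Symmetric int8 quantization: round(w / scale), clamped to [-127, 127]."""
    return [max(-127, min(127, round(w / scale))) for w in weights]


def flip_bit(q: int, bit: int) -> int:
    """Flip one bit of an int8 stored in two's complement; return the signed value."""
    raw = (q & 0xFF) ^ (1 << bit)  # operate on the raw 8-bit pattern
    return raw - 256 if raw >= 128 else raw


def dequant_dot(q_weights, scale, x):
    """Dequantize weights on the fly and take the dot product with input x."""
    return sum(qw * scale * xi for qw, xi in zip(q_weights, x))


def bitflip_stress_test(weights, x, scale, trials=1000, seed=0):
    """Inject one random single-bit flip per trial (a simulated single-event
    upset) and return the worst absolute output error versus the fault-free
    quantized model."""
    rng = random.Random(seed)
    q = quantize_int8(weights, scale)
    baseline = dequant_dot(q, scale, x)
    worst = 0.0
    for _ in range(trials):
        faulty = list(q)
        i = rng.randrange(len(faulty))
        faulty[i] = flip_bit(faulty[i], rng.randrange(8))
        worst = max(worst, abs(dequant_dot(faulty, scale, x) - baseline))
    return worst


if __name__ == "__main__":
    w = [0.5, -0.25, 0.1, 0.9]            # hypothetical toy weights
    scale = max(abs(v) for v in w) / 127  # map the largest weight to 127
    err = bitflip_stress_test(w, [1.0] * len(w), scale)
    print(f"worst single-bit-flip error: {err:.4f}")
```

Because int8 values are stored in two's complement, flipping the sign bit shifts a weight by exactly 128 quantization steps, so the worst case in this toy is 128 × scale. Mitigations such as error-correcting memory or redundant copies of critical weights aim to bound exactly that kind of jump.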

Limitations are obvious. Nuclear propulsion introduces mass penalties, regulatory hurdles, and a long development cadence. The radiation environment—while manageable with hardened hardware and shielding—produces reliability risks that require conservative design, extensive testing, and robust fault-tolerance. In short: the ambition is extraordinary, but turning it into routine capability requires decades of incremental, risk-aware engineering.
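Triple modular redundancy (TMR) is a classic fault-tolerance pattern in radiation environments: compute the same result on three replicas and take a majority vote, so an upset in any single replica is outvoted. This is a minimal sketch of the voter alone, assuming results are comparable with `==`; `VoteFailure` is a hypothetical name for the case where all three replicas disagree.

```python
from typing import TypeVar

T = TypeVar("T")


class VoteFailure(RuntimeError):
    """Raised when all three replicas disagree, so no majority exists."""


def tmr_vote(a: T, b: T, c: T) -> T:
    """Majority vote over three redundant results.

    A corrupted value in any single replica is outvoted by the other two.
    If all three differ, the fault cannot be masked and the caller must
    fall back (retry, switch units, or enter a safe mode).
    """
    if a == b or a == c:
        return a
    if b == c:
        return b
    raise VoteFailure(f"replicas disagree: {a!r}, {b!r}, {c!r}")


if __name__ == "__main__":
    # Replica 2 suffered a simulated bit flip (42 -> 43); the vote masks it.
    print(tmr_vote(42, 43, 42))
```

In flight hardware the three replicas are usually independent circuits or processors. Running the same computation three times on one processor (time redundancy) can catch transient upsets, but not a permanently damaged unit.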

What this means for products shipping this quarter is subtle but meaningful. Investors and teams should watch for a renewed emphasis on edge AI that is not only efficient but resilient to adverse operating conditions. Expect greater attention to fault tolerance, self-diagnosis, and autonomy that can operate with sparse or lossy communication. For startups racing to ship practical AI at the edge, the Mars program serves as a high-signal reminder: the future of AI in extreme settings will reward engineers who bake reliability into the core compute path, not as an afterthought.
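Self-diagnosis with graceful fallback can be sketched as a built-in self-test: each model carries a stored test input plus the answer recorded when it was validated, and the system picks the best model that still reproduces its answer, signalling safe mode if none do. `GuardedModel`, `select_model`, and the tolerance are hypothetical illustrations, not part of any NASA system; the point is that the decision needs no round trip to the ground.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence


@dataclass
class GuardedModel:
    """A model bundled with a stored test vector for built-in self-test."""
    name: str
    fn: Callable[[float], float]
    golden_input: float   # known input stored onboard
    golden_output: float  # answer recorded when the model was validated
    tol: float = 1e-6

    def self_check(self) -> bool:
        """Re-run the known input and compare against the stored answer."""
        try:
            return abs(self.fn(self.golden_input) - self.golden_output) <= self.tol
        except Exception:
            return False  # a crash during self-test counts as a failure


def select_model(candidates: Sequence[GuardedModel]) -> Optional[GuardedModel]:
    """Return the first candidate (ordered best-first) that passes self-test.

    None means every candidate failed and the caller should enter a safe
    mode. The decision is made locally; no ground contact is needed.
    """
    for model in candidates:
        if model.self_check():
            return model
    return None


if __name__ == "__main__":
    # Simulated corruption: the primary's output has drifted by 1e-3.
    primary = GuardedModel("primary", lambda x: 2.0 * x + 1e-3, 1.0, 2.0)
    fallback = GuardedModel("fallback", lambda x: 2.0 * x, 1.0, 2.0)
    chosen = select_model([primary, fallback])
    print(chosen.name if chosen else "safe mode")  # prints "fallback"
```

Ordering candidates best-first keeps the policy simple: the richest model runs while it is healthy, a lighter one takes over when it is not, and a `None` result is an explicit signal rather than a silent failure.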

In the end, NASA’s plan crystallizes a simple truth: if you want AI that keeps working when the link to Earth goes quiet and thermal margins run thin, you design for scarcity, not abundance. Most AI compute may still live in data centers, but the next frontier is a data center that can endure a months-long journey of hundreds of millions of miles.
