AI Agents Are Poised to Run Software Projects End-to-End

By Alexander Cole

Image / technologyreview.com

AI agents are poised to run entire software projects, not just write code.

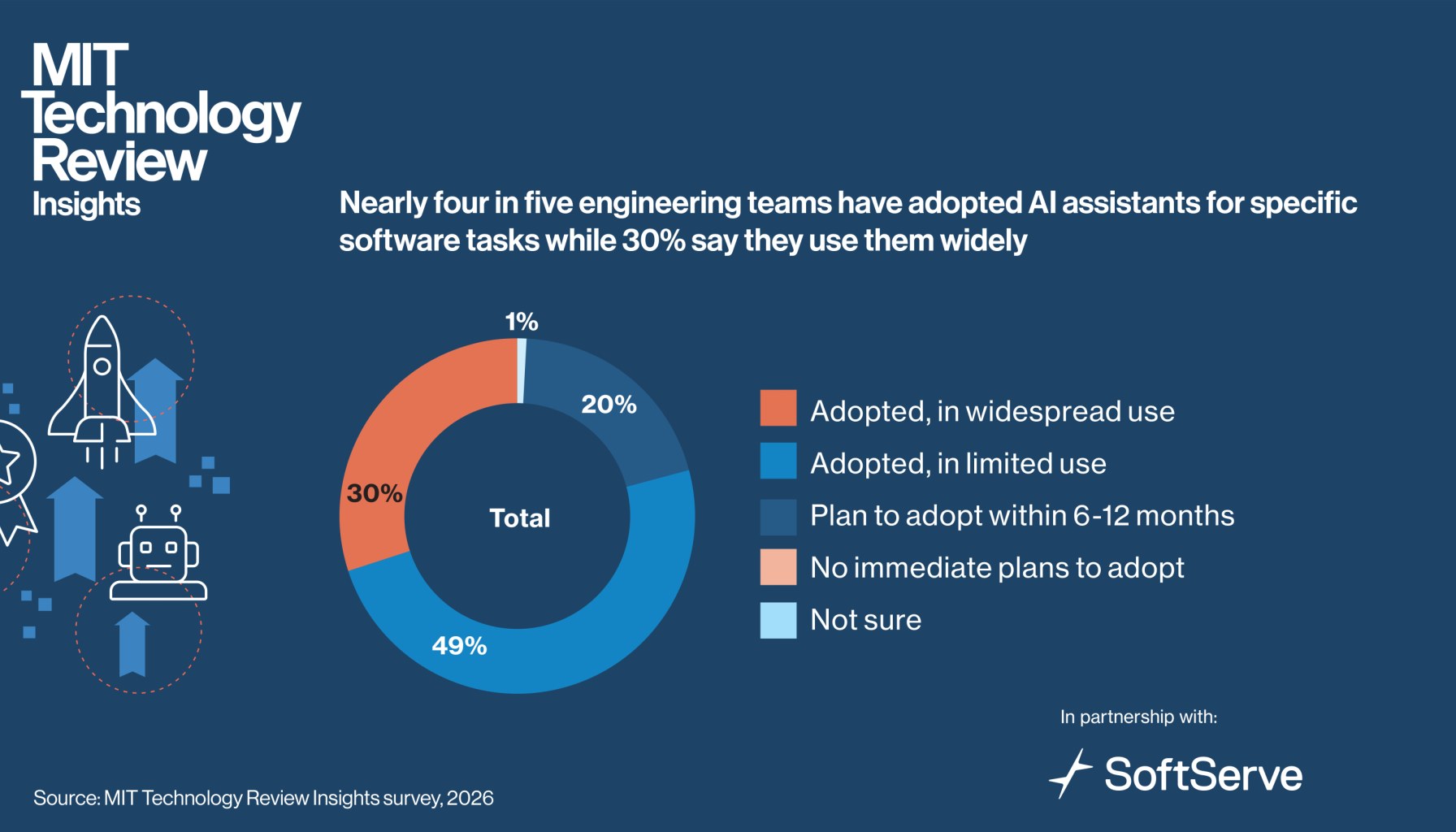

A Technology Review feature argues that software engineering is on the cusp of a third seismic shift: after open source unlocked global access and DevOps made delivery continuous, autonomous AI agents could soon plan, coordinate, and execute software work across teams. The claim isn’t a demo stunt—it’s a vision backed by a survey of 300 engineering and technology executives who say agentic AI has serious potential to automate end-to-end software processes, not merely assist with individual tasks. The catch: adoption remains selective and incremental, with real barriers to diffusion that teams must methodically solve before this becomes standard practice.

The core idea is simple to state and thorny in practice: agents that can reason, self-direct, and manage dependencies across a project. They could thread together coding, testing, deployment, and governance into a single autonomous loop, letting engineers focus on higher-level problems or product strategy. Yet the report stresses that most teams are still experimenting in narrow pockets. Ambition runs high, but the path to broad, enterprise-wide deployment is slow, requiring organizational changes, guardrails, and performance metrics that go beyond lines of code delivered.

For practitioners, two implications stand out. First, governance and coordination matter as much as capability. Autonomous agents don’t operate in a vacuum; their decisions must align with product goals, compliance constraints, and security policies. That means teams will need new workflows to monitor agent decisions, establish accountability, and intervene when autonomy drifts or fails to satisfy stakeholders. Second, data quality and toolchain readiness determine success. Agentic work hinges on reliable data pipelines, access to the right APIs, and consistently instrumented feedback loops. If data is siloed or tooling is brittle, the agent’s autonomy buys speed at the cost of reliability, an outcome many teams cannot risk in regulated or customer-critical domains.
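To make the governance point concrete, here is a minimal sketch of what "monitor agent decisions, establish accountability, and intervene" could look like in code. It is an illustration only, not something described in the report: the names `AgentAction`, `AuditRecord`, and `ApprovalGate`, and the "low"/"high" risk labels, are all hypothetical. The idea is that low-risk actions pass automatically, anything else waits on a human reviewer, and every decision lands in an audit log.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, List


@dataclass
class AgentAction:
    """A step an agent wants to take (hypothetical shape)."""
    agent: str
    description: str
    risk: str  # assumed labels: "low" or "high"


@dataclass
class AuditRecord:
    """One entry in the accountability trail."""
    action: AgentAction
    decision: str  # "auto-approved", "human-approved", or "rejected"
    timestamp: str


@dataclass
class ApprovalGate:
    """Routes agent actions through a human checkpoint and logs every outcome."""
    reviewer: Callable[[AgentAction], bool]  # stand-in for a human review step
    audit_log: List[AuditRecord] = field(default_factory=list)

    def submit(self, action: AgentAction) -> bool:
        if action.risk == "low":
            allowed, decision = True, "auto-approved"
        else:
            # Anything not explicitly low-risk goes to the reviewer.
            allowed = self.reviewer(action)
            decision = "human-approved" if allowed else "rejected"
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(AuditRecord(action, decision, stamp))
        return allowed
```

In use, a team might wire `reviewer` to a chat prompt or ticket approval; here a lambda stands in for the human:

```python
gate = ApprovalGate(reviewer=lambda action: False)  # reviewer that rejects everything
gate.submit(AgentAction("ci-agent", "rerun flaky test suite", "low"))
gate.submit(AgentAction("deploy-agent", "push release to production", "high"))
for record in gate.audit_log:
    print(record.timestamp, record.action.agent, record.decision)
```

Treating every unrecognized risk label as needing review is a deliberate choice in this sketch: a mislabeled action fails toward human oversight rather than toward autonomy.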

From an industry vantage point, the appeal is tangible but the tradeoffs are real. The report’s framing suggests a future where “end-to-end software process automation” is not a moonshot but a gradual layering of autonomy on top of existing engineering practices. The payoff is a more continuous, resilient development lifecycle, where agents handle routine orchestration and engineers tackle creative strategy and complex design. But that transition invites questions about cost, latency, and failure modes. If agents misinterpret a product objective or chase suboptimal tradeoffs, the velocity gain could be offset by debugging and governance overhead.

An analogy helps: imagine a seasoned conductor who can read every instrument’s part, adjust tempo on the fly, and rebalance sections as the symphony unfolds. The conductor is the AI agent, and the orchestra is your entire software project. The advantage is striking, provided the conductor stays aligned with the score and the orchestra trusts the cues. The risk is miscommunication, or over-reliance on automation in moments that demand human judgment.

For products shipping this quarter, expect pilots that test agentic AI in constrained domains, such as CI/CD orchestration, incident response playbooks, or modular feature rollouts, rather than wholesale ownership of a product line. Early adopters will measure success not by a single metric but by a combination: shorter cycle time, reduced cognitive load on engineers, and improved governance traceability. Expect new guardrails, safer autonomy patterns, and clear human-in-the-loop checkpoints as preconditions for broader adoption.

Three failure modes are worth naming up front: brittle autonomy when goals are poorly specified; cascading errors when the agent relies on imperfect data; and governance gaps that let misaligned updates slip through. The report highlights that diffusion will take time and deliberate effort to lower the cultural, technical, and regulatory barriers. In other words, this is not “set it and forget it” AI; it’s a shift toward agent-directed work integrated with human oversight.

In sum, agentic AI is moving from a promising concept to a practical, albeit cautious, pathway for software engineering. The next few quarters will reveal how teams balance autonomy with accountability, speed with reliability, and ambition with discipline.
