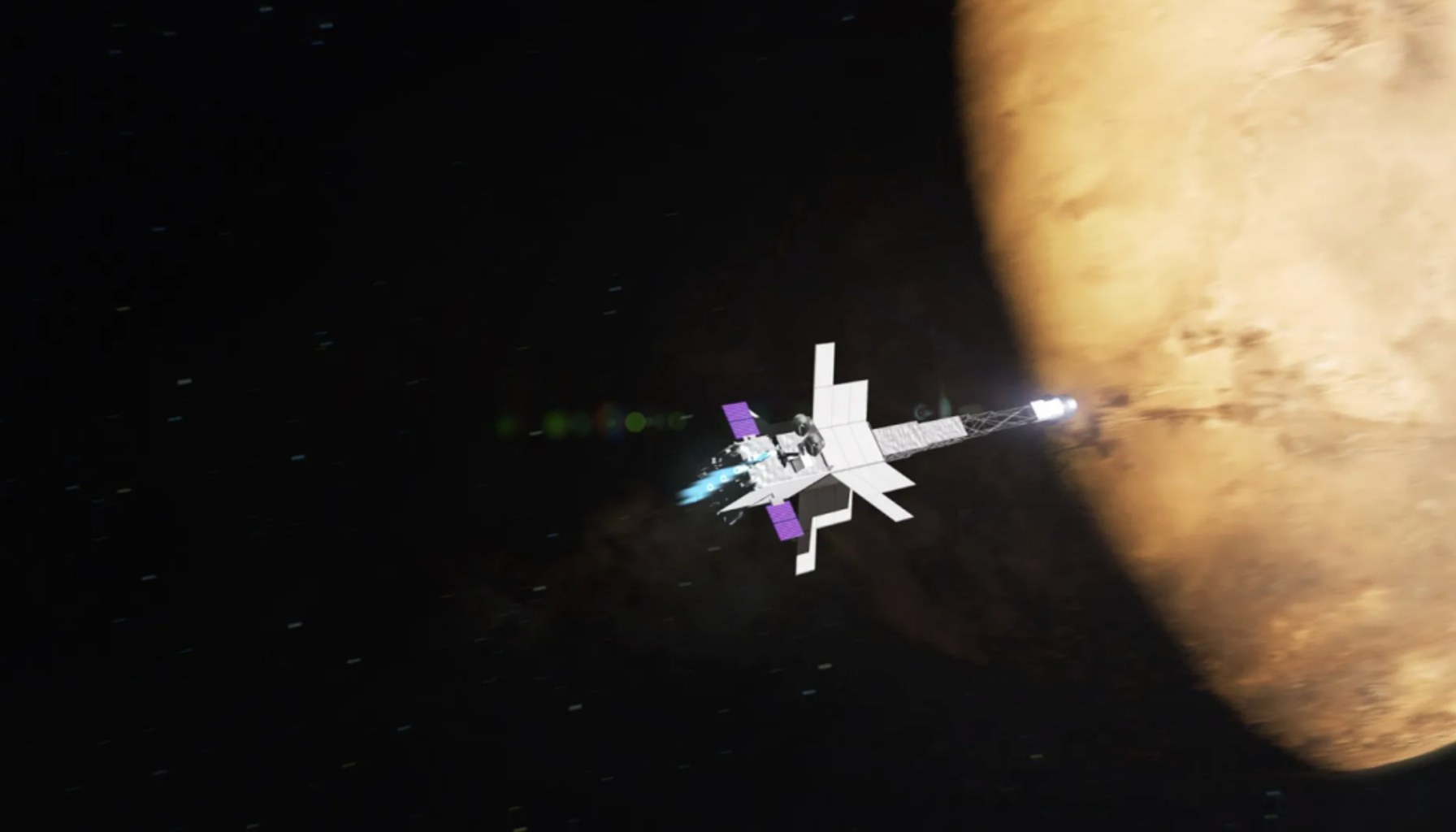

Nuclear Mars Mission Set for 2028

By Alexander Cole

Image / technologyreview.com

NASA plans to launch a nuclear reactor-powered spacecraft to Mars by 2028, a move that would mark the first interplanetary mission powered by a reactor and redefine long-duration spaceflight.

The plan, disclosed as Artemis II heads off on a lunar slingshot, signals a broader ambition: use a compact nuclear core to sustain propulsion, life support, and onboard computation for months or years of cruise and deep-space operations without frequent Earth-based refueling. MIT Technology Review notes the project is still shrouded in mystery, with experts asked to unpack how such a system could work and what it would require to keep humans and robots safe on a voyage that could outlive a typical rover mission. The timing—targeting Mars by the end of 2028—puts the United States on a fast track to a capability that could outpace rival space programs and reshape the economics of planetary exploration.

One key wrinkle is the balance of power, heat, and reliability. A reactor-powered platform promises more onboard energy than solar arrays or a conventional chemical-propulsion architecture can supply, enabling richer science payloads, more capable sensors, and robust autonomous systems. But it also intensifies the engineering burden: shielding sensitive electronics from radiation, cooling high-power hardware in a vacuum, and ensuring fail-safe operation across the mission’s multi-year lifecycle. In practice, mission design will hinge on how to pair a compact reactor with a dependable power management system, a resilient propulsion architecture, and a fault-tolerant autonomy stack that can operate with limited ground intervention.

From an AI and autonomy standpoint, the implications are material. Long-duration missions demand smarter onboard decision-making, anomaly detection, and navigation without constant ground oversight. Yet AI in space is a high-wire act: radiation, limited updates, and latency all constrain how models are developed, tested, and deployed. The technical challenge isn’t just “more compute”—it’s the reliability of that compute under radiation, the ability to recover from errors without cascading failures, and the discipline to keep models aligned with mission goals as conditions evolve millions of miles from Earth. The result could be a more autonomous spacecraft that can keep a science payload on course, reallocate power to instruments as science priorities shift, and diagnose systems without waiting for a relay from mission control.
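One common way to keep a single corrupted result from cascading is to run a computation on several replicas and accept only the value a strict majority agrees on. The Python sketch below illustrates that general idea; it is not drawn from the NASA plan, and the names `majority_vote` and `run_redundant` are hypothetical.

```python
from collections import Counter
from typing import Callable, Hashable, Sequence, TypeVar

T = TypeVar("T", bound=Hashable)


def majority_vote(results: Sequence[T]) -> T:
    """Return the value reported by a strict majority of replicas.

    Raises ValueError when no value has a strict majority, so the caller
    can fall back to a safe mode instead of acting on suspect data.
    """
    if not results:
        raise ValueError("no replica results to vote on")
    value, count = Counter(results).most_common(1)[0]
    if count * 2 <= len(results):
        raise ValueError("no majority among replica results")
    return value


def run_redundant(task: Callable[[], T], replicas: int = 3) -> T:
    """Run the same task on several (hypothetical) replicas and vote."""
    return majority_vote([task() for _ in range(replicas)])


if __name__ == "__main__":
    # Illustrative only: one of three replicas reports a corrupted reading.
    print(majority_vote([42, 42, 17]))
```

Raising an error when the replicas disagree without a majority is a deliberate choice in this sketch: it hands the decision to a higher-level recovery routine instead of guessing.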

Four practitioner insights stand out. First, safety and regulatory gating will be a central constraint; a nuclear system in deep space introduces stringent risk management and verification regimes that extend far beyond typical aerospace development timelines. Second, compute and thermal budgets will drive architectural choices: higher power draws enable richer AI stacks, but demand advanced radiation-hardened hardware and aggressive cooling strategies in an environment where every watt counts. Third, data and software lifecycle management will be slower and more careful than in Earth-based AI deployments: software updates, model retraining, and fault-injection tests must be conducted with extreme caution to avoid mission-critical failures far from home. Fourth, the mission’s success (or failure) will likely ripple into commercial space: expect increased emphasis on radiation-tolerant AI accelerators, robust fault-tolerant software, and more transparent safety cases for autonomous systems used in satellites and deep-space probes.

An analogy helps: it’s like equipping a marathon runner with a nuclear-powered jetpack. Endurance and reach become possible, but the runner must run in a suit built to survive storms, keep the engine cool, and never lose sight of the checkpoint that is Earth.

For products shipping this quarter, the story suggests a clear trend to watch: a ramp in investments around autonomy-grade compute hardware and error-resilient AI stacks designed for extreme environments. Startups and incumbents alike may push more radiation-hardened processors, redundancy-forward architectures, and simulation-heavy verification pipelines to de-risk future missions. It’s not about a consumer-like upgrade; it’s about building the reliability chassis that makes truly autonomous, long-horizon spaceflight viable.
