Perception-Driven Robots Reshape Industry

By Maxine Shaw

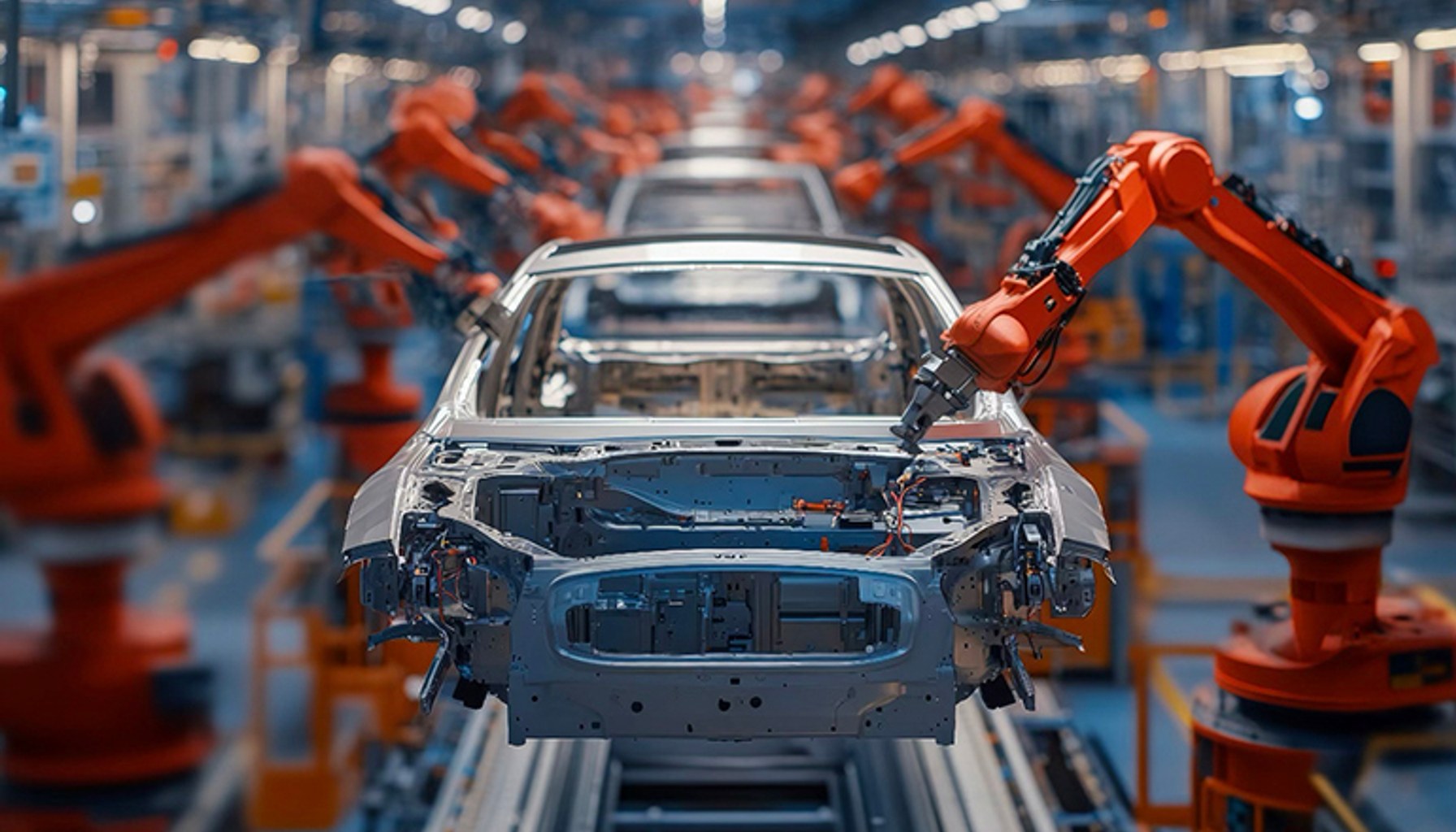

Image / therobotreport.com

Perception is now infrastructure, not a feature. Systems in cars, warehouses, and hospitals are being built to see, hear, and respond in real time, turning sensing from a specialty into the backbone of how they operate. Because they must interpret their surroundings and act safely in dynamic environments, this shift changes everything from deployment timelines to maintenance needs. (The Convergence in Perception Systems from Cars to Robots)

That shift is not about bolting on one more sensor and calling it a day. It signals a move toward mobility systems that are perception-driven, compute-intensive, and safety-critical. The real challenge, as industry observers note, is at the system level: ensuring that sensing, connectivity, compute, power, and safety work together reliably under real-world conditions. In other words, perception stops being a feature and becomes the infrastructure that governs how robots move, decide, and interact. (The Convergence in Perception Systems from Cars to Robots)

Autonomous mobile robots are no longer confined to fenced cells or experimental trials. They now operate continuously in warehouses and hospitals, navigating shared spaces and reacting to unstructured behavior around them in real time. Drones are flying longer distances with greater autonomy, and humanoid systems are beginning to work in close proximity to people. Both trends amplify the need for synchronized sensing modalities, robust edge compute, and safe, predictable control loops. This convergence is reshaping what a deployment plan looks like in industrial settings. (The Convergence in Perception Systems from Cars to Robots)

Industry leaders are starting to treat sensing as the connective tissue of an entire robotic ecosystem. The view of perception as a standalone capability is giving way to one in which perception, compute, and safety form a single, integrated, ongoing workflow. Automotive thinking about distributed nervous systems, in which networked sensors, processors, and controls behave as a single, predictable system, has begun carrying over into robotics, drones, and humanoids. The result is a push to align risk, reliability, and real-world performance more closely across platforms and use cases. (The Convergence in Perception Systems from Cars to Robots)

Practitioners point to several watchouts. For one, the integration effort becomes the project rather than an afterthought, because the real work lies in making sensing, connectivity, compute, power, and safety function in unison. Floor supervisors report that perception becomes a shared responsibility among hardware vendors, software developers, and operations teams, with the payoff being fewer unplanned outages and steadier throughput. Operational metrics show that even incremental gains in perception fidelity can translate into smoother workflows, less rework, and tighter cycle times, but only if the system is designed to handle fusion latency, calibration drift, and network bottlenecks. (The Convergence in Perception Systems from Cars to Robots)

Two concrete practitioner insights stand out. First, plan for a distributed nervous system rather than a single-module upgrade. That means budgets, timelines, and training now have to cover sensors, edge processing, communications, and safety interlocks as one cohesive package. Second, safety and reliability become ongoing operational metrics, not one-time checkpoints. When perception is infrastructure, a small fusion failure or latency spike can ripple across the entire workflow, so robust testing, redundancy, and clear responsibility across teams are non-negotiable. These factors separate a pilot that merely succeeds from a deployment with measurable payback. (The Convergence in Perception Systems from Cars to Robots)

As the industry leans into this convergence, the line between automotive sensing strategies and industrial automation is blurring. The principles that gave cars their distributed nervous systems, including multimodal sensing, tight sensor fusion, and edge compute with safety layers, are shaping how warehouses, hospitals, and field operations will run over the next decade. Perception has become the backbone of practical automation, changing not only what is possible but also how organizations plan, fund, and maintain their robotic assets. (The Convergence in Perception Systems from Cars to Robots)

- The Convergence in Perception Systems from Cars to Robots / therobotreport.com / Trade / Published May 13, 2026 / Accessed May 14, 2026
