AI Compute Surges; Wall Slips

By Alexander Cole

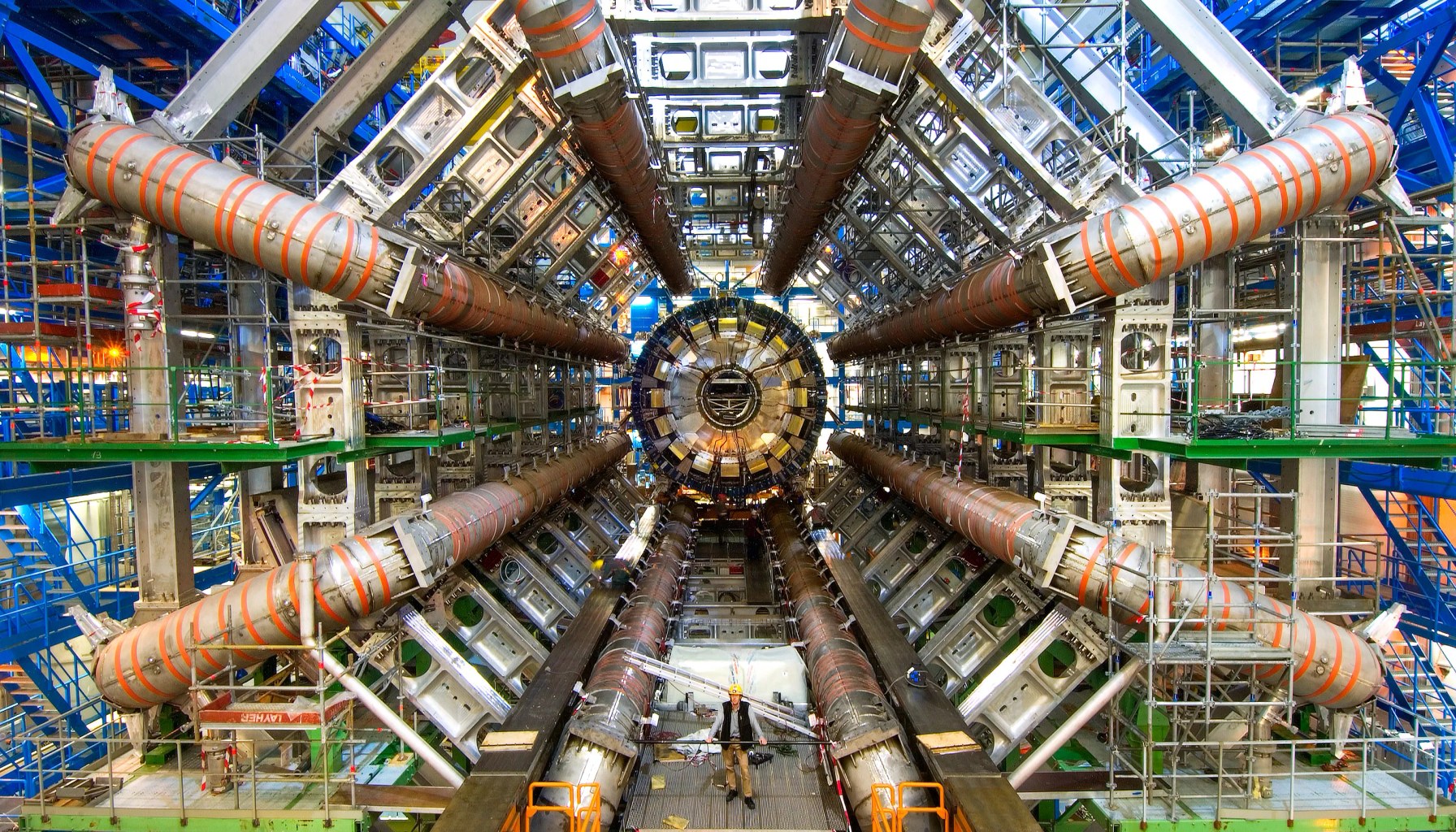

Image / technologyreview.com

AI compute growth is exploding, and the wall critics warned about may never arrive.

The Download, a Technology Review newsletter, frames the latest optimism about AI progress around a simple argument: the drivers of computation aren’t slowing down. Mustafa Suleyman, described in the piece as Microsoft AI CEO and Google DeepMind cofounder, argues that AI development will not hit a hard bottleneck “anytime soon.” The reason, the article suggests, is not just bigger models but smarter hardware infrastructure. Three forces are converging to push the trajectory upward: faster basic calculators (cores and accelerators that keep improving), high-bandwidth memory feeding ever-larger data streams, and software-enabled orchestration that turns a hodgepodge of GPUs into a coordinated cluster that behaves almost like a single supercomputer.

In plain terms, the three advances act like upgrades to a factory line. First, faster processors and accelerators cut wall-clock time for training and fine-tuning, letting researchers iterate more quickly. Second, high-bandwidth memory reduces the cost of moving data between compute units, which is crucial when models grow to tens or hundreds of billions of parameters and when you’re juggling activations, gradients, and large datasets. Third, orchestration technologies (software that can coordinate disparate GPUs across racks and data centers) turn a collection of ordinary GPUs into a massively capable compute engine. The combined effect, the piece implies, is not just bigger models but smarter, better-utilized hardware at scale.
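The memory-bandwidth point can be made concrete with a roofline-style estimate, in which attainable throughput is capped either by peak compute or by how fast memory can deliver data. The sketch below is illustrative only: the hardware figures are hypothetical round numbers, not taken from the article or from any specific product, and the function name `attainable_tflops` is our own.

```python
def attainable_tflops(peak_tflops: float, bandwidth_tb_s: float, flops_per_byte: float) -> float:
    """Roofline estimate: throughput is limited by compute or by memory traffic.

    flops_per_byte is the workload's arithmetic intensity, i.e. how many
    floating-point operations it performs per byte moved from memory.
    """
    # TB/s * FLOP/byte = TFLOP/s that the memory system can sustain
    memory_limited_tflops = bandwidth_tb_s * flops_per_byte
    return min(peak_tflops, memory_limited_tflops)


# Hypothetical accelerator with 1000 TFLOP/s of peak compute (an assumption).
PEAK_TFLOPS = 1000.0

for bandwidth in (2.0, 4.0):          # hypothetical memory bandwidth, TB/s
    for intensity in (50.0, 500.0):   # hypothetical FLOPs per byte moved
        result = attainable_tflops(PEAK_TFLOPS, bandwidth, intensity)
        print(f"bandwidth={bandwidth} TB/s, intensity={intensity}: {result} TFLOP/s")
```

Under these assumed numbers, the low-intensity workload is memory-bound, so doubling bandwidth doubles its attainable throughput, while the high-intensity workload already sits at the compute ceiling and gains nothing from extra bandwidth.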

The premise resonates with a broader industry trend: compute efficiency and system design are becoming as important as raw arithmetic throughput. It’s not just about squeezing out more FLOPs; it’s about reducing data shuffles, improving memory locality, and making distributed training behave like a single, well-tuned machine. The article positions Suleyman’s view as a counterpoint to worries that AI progress will stall once the appetite for compute outpaces supply. If the three accelerants hold, those fears may be premature.

For practitioners in the trenches, a few concrete takeaways emerge. First, memory bandwidth matters as models and activations balloon; teams should weigh investments in memory-rich architectures and memory-centric data paths, not just larger GPUs. Second, software orchestration and interconnects matter as much as chip speed; expect to rely on advanced distributed training frameworks, better synchronization strategies, and pipeline or tensor parallelism to keep GPUs productive. Third, the payoff isn’t limited to training: the same hardware trends can shorten inference latencies if deployed thoughtfully, though that requires careful data flow and model optimization. Fourth, all of this brings energy and cost considerations to the fore: bigger clusters demand smarter cooling, power planning, and vendor coordination, or you’ll pay a premium for scale without meeting product timelines.

All this hints at what to expect this quarter. If you’re shipping consumer or enterprise AI features, the message is to lean into compute-aware product design: prioritize model optimizations (mixed precision, sparsity, and possibly mixture-of-experts approaches), and design data pipelines that keep your accelerators fed instead of becoming the bottleneck. Be prepared for vendors to push higher-bandwidth memory and faster interconnect options, and build your MLOps around robust distributed training practices so you can capitalize on the new hardware without blowing up costs or timelines.
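To see why mixed precision appears on that list, a back-of-the-envelope calculation helps. The sketch below estimates the memory needed for model weights alone at different numeric precisions. The 70-billion-parameter model size is a hypothetical example, the helper name `weight_memory_gb` is our own, and the estimate deliberately ignores activations, gradients, and optimizer state, which add substantially more in practice.

```python
def weight_memory_gb(num_params: int, bytes_per_param: int) -> float:
    """Memory for model weights only, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


# Hypothetical model size (an assumption, not a figure from the article).
NUM_PARAMS = 70_000_000_000

# Bytes per parameter for common numeric formats.
PRECISIONS = {"fp32": 4, "fp16/bf16": 2, "int8": 1}

for name, nbytes in PRECISIONS.items():
    print(f"{name}: {weight_memory_gb(NUM_PARAMS, nbytes)} GB of weights")
```

Halving the bytes per parameter halves both the memory the weights occupy and the data that must move through the memory system on each pass, which is where the precision choice meets the bandwidth argument.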

The broader industry takeaway: the “wall” argument isn’t dead, but the countervailing forces—faster hardware, richer memory, smarter software—are reshaping what “scale” means and how quickly teams can move from prototype to production.
