AI Growth Surges: Three Engines Power the Explosion

By Alexander Cole

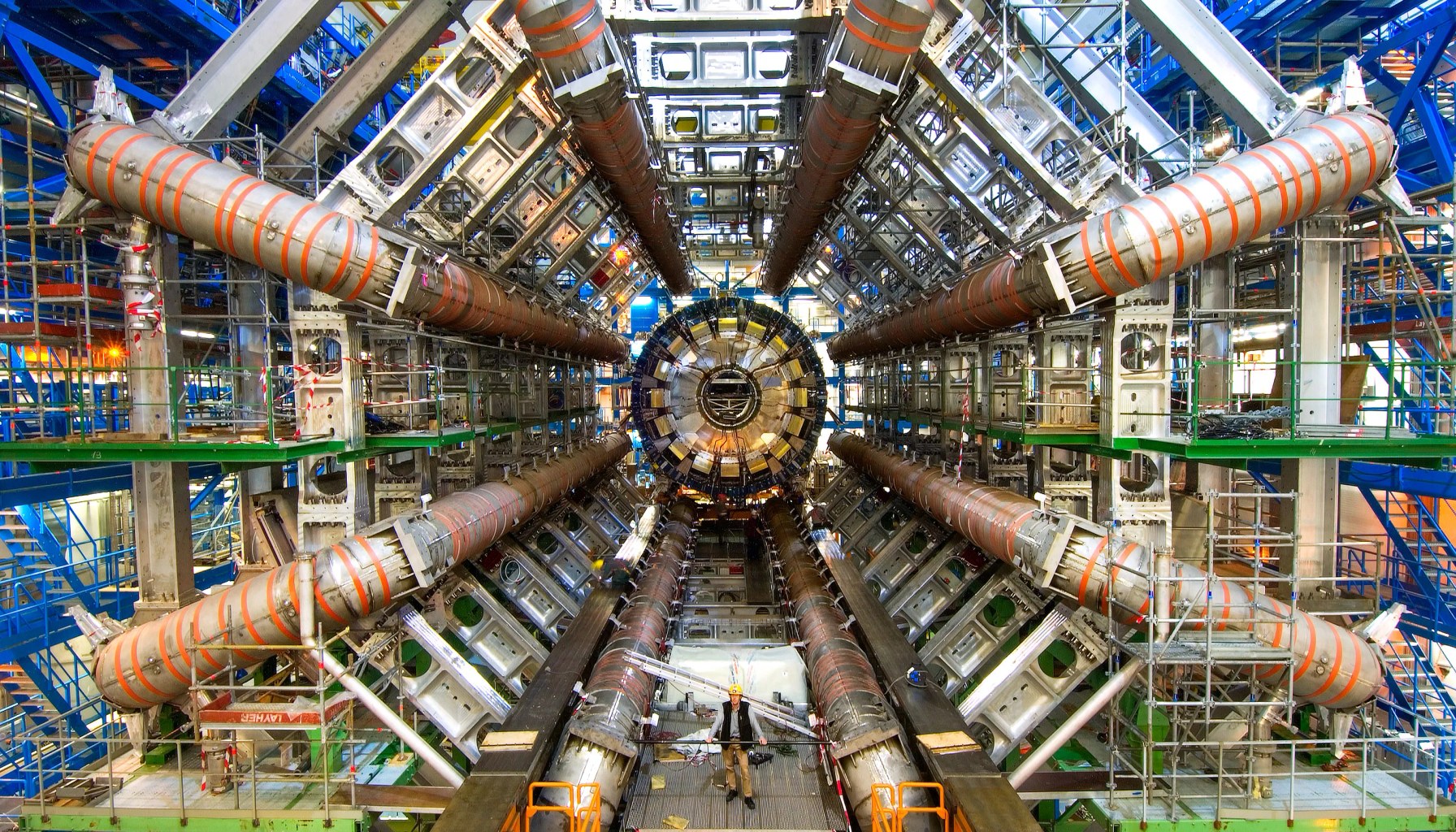

Image / technologyreview.com

AI compute keeps exploding, and the skeptics keep losing.

Mustafa Suleyman’s take in The Download lands with a blunt bet: AI development won’t hit a wall anytime soon. The reason, the op-ed argues, isn’t a magic wand but three concrete enablers that have quietly redefined what “scale” means: faster basic calculators (that is, cheaper, quicker silicon), high-bandwidth memory that keeps data flowing at speed, and software-enabled technologies that turn a patchwork of GPUs into one enormous, coordinated supercomputer. It’s not just bigger models; it’s smarter use of the entire compute stack.

Put plainly, the trio is changing the calculus for teams trying to train, fine-tune, and deploy AI. Faster chips lower the per-step cost of training, but memory bandwidth is what keeps those steps from choking on data. When you combine the two with software that fuses thousands of GPUs into cohesive systems, you don’t just scale up—you scale out with real efficiency. The result, as the piece puts it, is exponential progress that keeps outpacing the skeptics’ predictions about a looming compute wall.
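To make the interplay between chip speed and memory bandwidth concrete, here is a minimal Python sketch of the standard roofline bound. It is an illustration, not something taken from the op-ed. The function name `attainable_flops` and the hardware figures (100 TFLOP/s peak compute, 1 TB/s memory bandwidth) are hypothetical round numbers chosen for readability, not the specs of any real chip.

```python
def attainable_flops(peak_flops: float, mem_bandwidth: float, intensity: float) -> float:
    """Roofline bound on throughput in FLOP/s.

    peak_flops    -- peak compute rate of the chip (FLOP/s)
    mem_bandwidth -- memory bandwidth (bytes/s)
    intensity     -- arithmetic intensity of the workload (FLOP per byte moved)
    """
    # A workload cannot run faster than the chip computes, nor faster than
    # memory can feed it; the lower of the two limits wins.
    return min(peak_flops, mem_bandwidth * intensity)


# Hypothetical hardware figures, assumed purely for illustration.
PEAK_FLOPS = 100e12      # 100 TFLOP/s
MEM_BANDWIDTH = 1e12     # 1 TB/s

for intensity in (10, 100, 1000):
    bound = attainable_flops(PEAK_FLOPS, MEM_BANDWIDTH, intensity)
    limiter = "memory-bound" if bound < PEAK_FLOPS else "compute-bound"
    print(f"{intensity:>5} FLOP/byte: {bound / 1e12:.0f} TFLOP/s ({limiter})")
```

Under these assumed numbers, a workload that performs only 10 operations per byte moved is capped at a tenth of the chip's peak. A faster chip would not help it at all, while more memory bandwidth would.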

For practitioners, that’s both an invitation and a warning. The invitation is obvious: more room to experiment, iterate, and deploy larger, more capable models. The warning is subtler but crucial. Scale without discipline soon devolves into runaway costs, energy use, and maintenance complexity. In practice, teams that want to ride this wave must invest not just in GPUs, but in the entire stack: memory architectures that keep data hot, interconnects that minimize idle time, and orchestration that makes distributed training feel like a single machine.

A vivid analogy helps: think of this as turning a pile of Lego bricks into a city. The bricks are the faster calculators, the connectors are the high-bandwidth memory channels, and the blueprint is the software that coordinates thousands of bricks at once. You can build a bigger city only if all three pieces fit: good bricks, connectors that snap together easily, and construction rules that scale. Miss any one and you end up with a sprawling, wobbly mess; with all three, you get a stable metropolis that grows with demand.

Behind the optimism are practical realities. First, the economics of scale remain steep. Even with improved efficiency, training and fine-tuning large models demand substantial budgets, careful cost governance, and a plan for ongoing hardware and software upgrades. Second, the energy and cooling footprint of massive clusters is real, and it is more than a passing concern for climate-focused investors. Third, data quality and alignment problems don’t scale away simply because hardware does; bigger models still need clean data pipelines and robust safety checks, or they risk amplifying biases and errors.

What this means for products shipping this quarter is concrete. Expect more teams to lean on off-the-shelf foundation models and API services for rapid prototyping, paired with targeted fine-tuning and adapter-based approaches to tailor capabilities without revisiting full-scale training. In parallel, there’ll be increasing emphasis on efficiency: 8-bit or 4-bit quantization, smarter prompt design, and retrieval-augmented workflows to cut inference costs while preserving usefulness. For startups and product teams, the move is clear—invest in the whole stack: hardware-aware model design, scalable training pipelines, and cost-aware evaluation to avoid runaway budget traps.
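For readers unfamiliar with what 8-bit quantization involves, the following is a minimal sketch of one common scheme, symmetric per-tensor int8 quantization, in plain Python. It illustrates the general idea only and is not the method of any particular library or of the op-ed. The function names `quantize_int8` and `dequantize`, and the sample weights, are made up for this example.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale works.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


# Made-up weights, assumed purely for illustration.
weights = [0.82, -1.0, 0.003, 0.41, -0.27]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

for original, q, approx in zip(weights, quantized, restored):
    print(f"{original:+.4f} -> {q:+4d} -> {approx:+.4f}")
```

Each weight is stored in one byte instead of the four a 32-bit float needs, at the price of a rounding error of at most half the scale per value. Production systems typically refine this with per-channel scales or calibration data, which this sketch leaves out.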

The takeaway isn’t hype; it’s a logistical roadmap. Compute is no longer the bottleneck it once was, not because hardware is magic but because software and memory have finally caught up with it. The next wave will reward those who optimize the full chain, from silicon to system to surface-level product features.
