RLWRLD Debuts RLDX-1 Dexterity Foundation

By Sophia Chen

Image / therobotreport.com

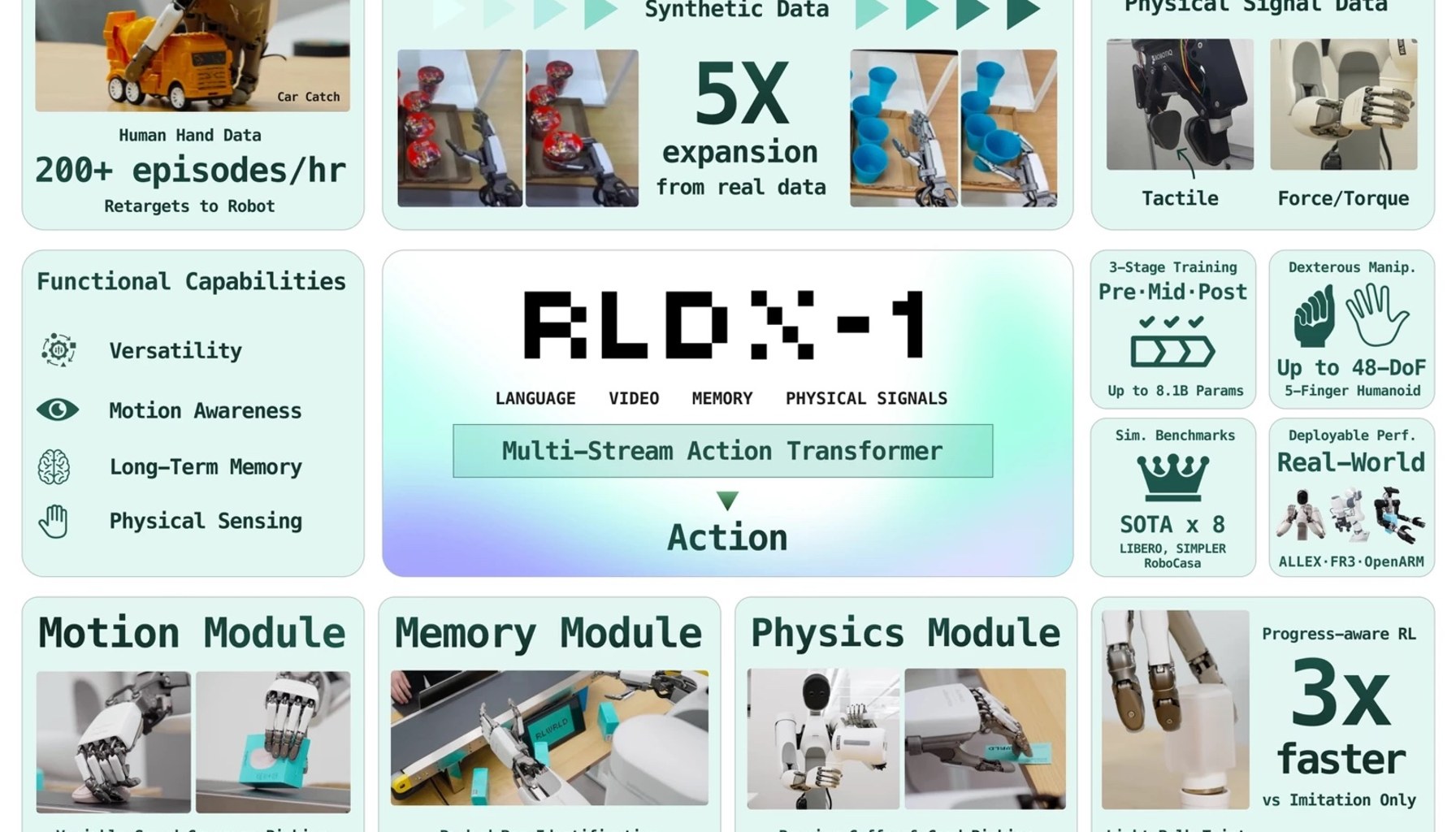

RLDX-1 promises robot hands that can see, feel, and adapt on the shop floor. RLWRLD last week unveiled a dexterity-first foundation model designed to power high-DoF hands across single-arm, dual-arm, and humanoid embodiments.

The company frames real-world interaction as a trio: recognizing what to do, maintaining relevant state over time, and grounding decisions in physically meaningful signals. It has built RLDX-1 to cover the complete robotics lifecycle, from scalable data collection to deployment strategies, aiming for precision and generalization in both simulated environments and physical industrial tasks.

RLWRLD distills a long list of customer needs into five regimes of dexterity, a framework embedded in DexBench that maps specific failure modes, from pouring a pot of coffee to picking moving objects or performing fine fingertip manipulation, into testable scenarios. The goal, the company says, is to close the loop between vision, touch, memory, and action in real devices.

The technical narrative emphasizes an end-to-end approach: a scalable data-collection pipeline, an architecture designed for dexterous manipulation, robust training regimens, and deployment strategies tuned for real tasks. RLWRLD claims the result is a single model that can “see, feel, remember, and adapt,” deployable across single-arm, dual-arm, and humanoid forms with high-DoF hands, and capable of cross-domain transfer between simulation and the factory floor.

From a practitioner standpoint, the big draw is the claimed integration of perception, proprioception, and stateful decision making into a single model. Demonstration footage and benchmarks suggest RLDX-1 is being pitched as a unifying core for dexterous hands rather than a collection of task-specific controllers. The emphasis on grounded signals implies a move away from brittle, purely simulated policies toward behaviors that respond to real contact, force, and pose feedback.

Despite the upbeat framing, several practical gaps remain. The release does not disclose exact DoF counts or payload capacities for the single-arm, dual-arm, or humanoid embodiments, nor does it publish power sources, runtime, or charging requirements. For buyers and integrators, that absence matters: without concrete torque and payload data and a clear power plan, it is hard to gauge how RLDX-1 will cope with heavy or dynamic tasks on site. The technical documentation shows high-DoF hands but leaves the actual numbers unspecified, and there is no public rundown of energy efficiency or continuous-operation capabilities.

Industry observers will be watching whether RLDX-1 translates into field-ready dexterity or remains a lab milestone dressed up as a platform. The company frames the model as deployable across single-arm, dual-arm, and humanoid embodiments with high-DoF hands, but until torque specs, payload envelopes, and endurance figures surface in engineering documentation, the path from promising research to reliable, repeatable automation remains unproven on real lines. Demonstration footage shows potential, but the real test is how the model handles the industrial edge cases that routinely trip up dexterity-first systems.

Practitioners should watch for three things next: transparent DoF and payload disclosures, needed to validate whether a given humanoid form can actually lift or manipulate a target part; energy and runtime data, needed to assess suitability for continuous operation on a factory line; and independent benchmarks that stress memory, force sensing, and real-time grounding under noisy industrial conditions. If RLWRLD follows with detailed specs and independent validation, RLDX-1 could move from a compelling architecture to a verifiable production platform.

- RLWRLD releases RLDX-1, a dexterity-first foundation model for robot hands / therobotreport.com / Trade / Published May 11, 2026 / Accessed May 11, 2026
