What we’re watching next in ai-ml

By Alexander Cole

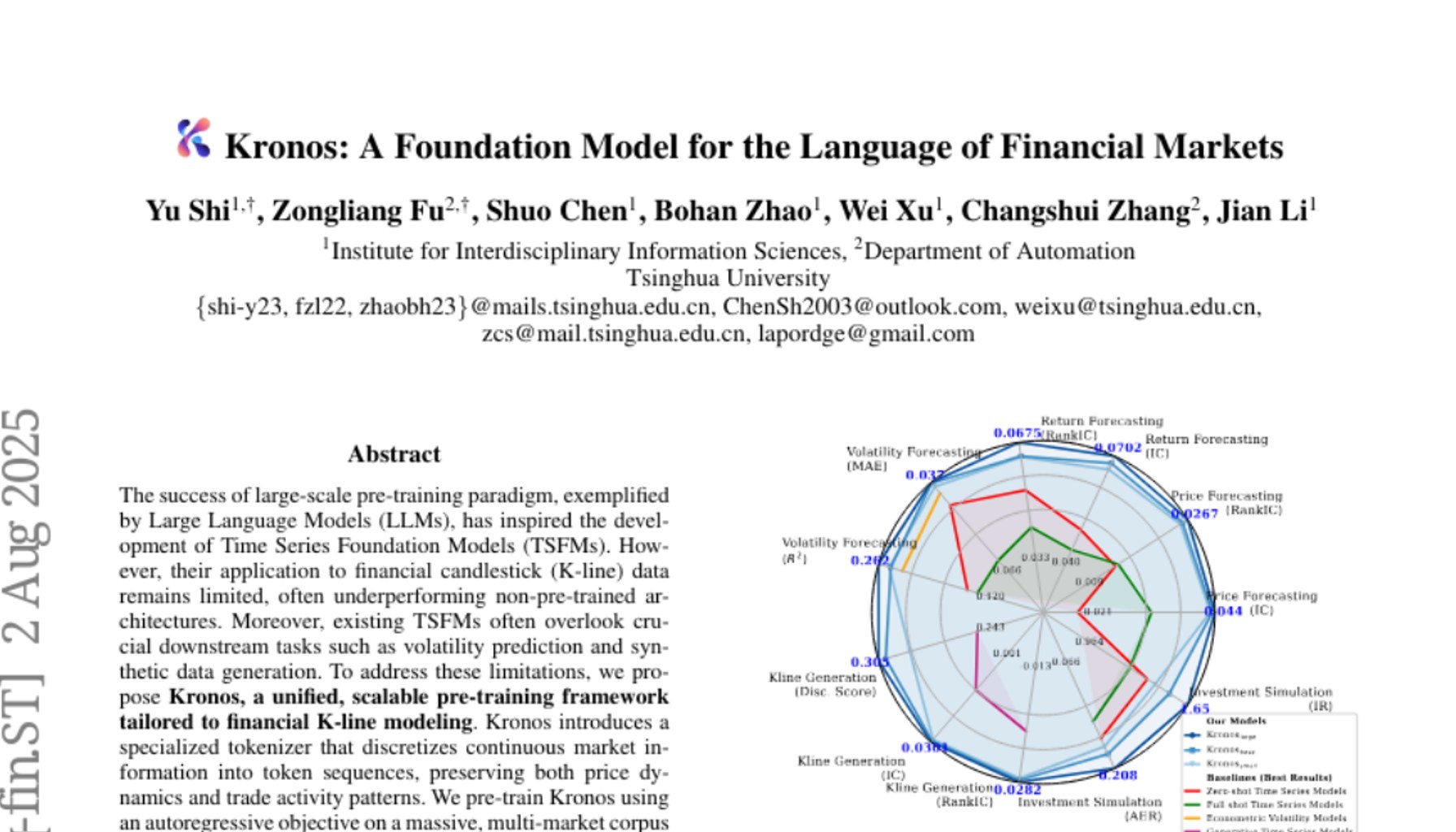

Image / paperswithcode.com

Smaller, cheaper, better—AI models are finally catching up.

The latest wave of AI research isn’t about bigger armies of GPUs; it’s about smarter efficiency. A flood of papers on arXiv and in Papers with Code, alongside new OpenAI research, points to a pivot: you can squeeze more performance out of fewer parameters with clever training, data, and architectural tricks. In plain terms, the industry is inching toward parity with larger models on many tasks, while dramatically lowering compute and memory demands. The practical upshot: faster iteration cycles, cheaper inference, and a path to democratized AI services that don’t force every startup into a multi-hundred-million-dollar capex hurdle.

But this is not a free lunch. The gains are highly task- and data-dependent, and the reliability and safety envelopes aren’t automatically better just because a model is smaller. Benchmark results show encouraging signs, but they’re uneven across domains. Some studies report strong instruction-following and generalization with sub-1B-parameter architectures when paired with smart distillation, data curation, or reinforcement learning from human feedback (RLHF). Others reveal that reductions in size often come with tradeoffs in robustness, calibration, or edge-case behavior. The technical report details vary widely by task and dataset, so practitioners should treat “smaller is better” as a directional trend, not a universal verdict.

For product teams, this matters now. If you can cut compute without sacrificing critical reliability, you unlock cheaper hosting, lower latency, and easier MLOps at scale. But the same trend raises questions about where to draw the line between model size, training cost, and user-observable quality. The open questions aren’t just scientific; they’re commercial: which tasks actually benefit from a smaller model, and where do you still need the safety rails and red-team testing that larger systems tend to demand?

Here’s the practical gist for engineers and leaders eyeing the next quarter:

What we’re watching next in ai-ml

Sources

Newsletter

The Robotics Briefing

Weekly intelligence on automation, regulation, and investment trends - crafted for operators, researchers, and policy leaders.

No spam. Unsubscribe anytime. Read our privacy policy for details.