AI Compute Defies the Wall, Growth Surges

By Alexander Cole

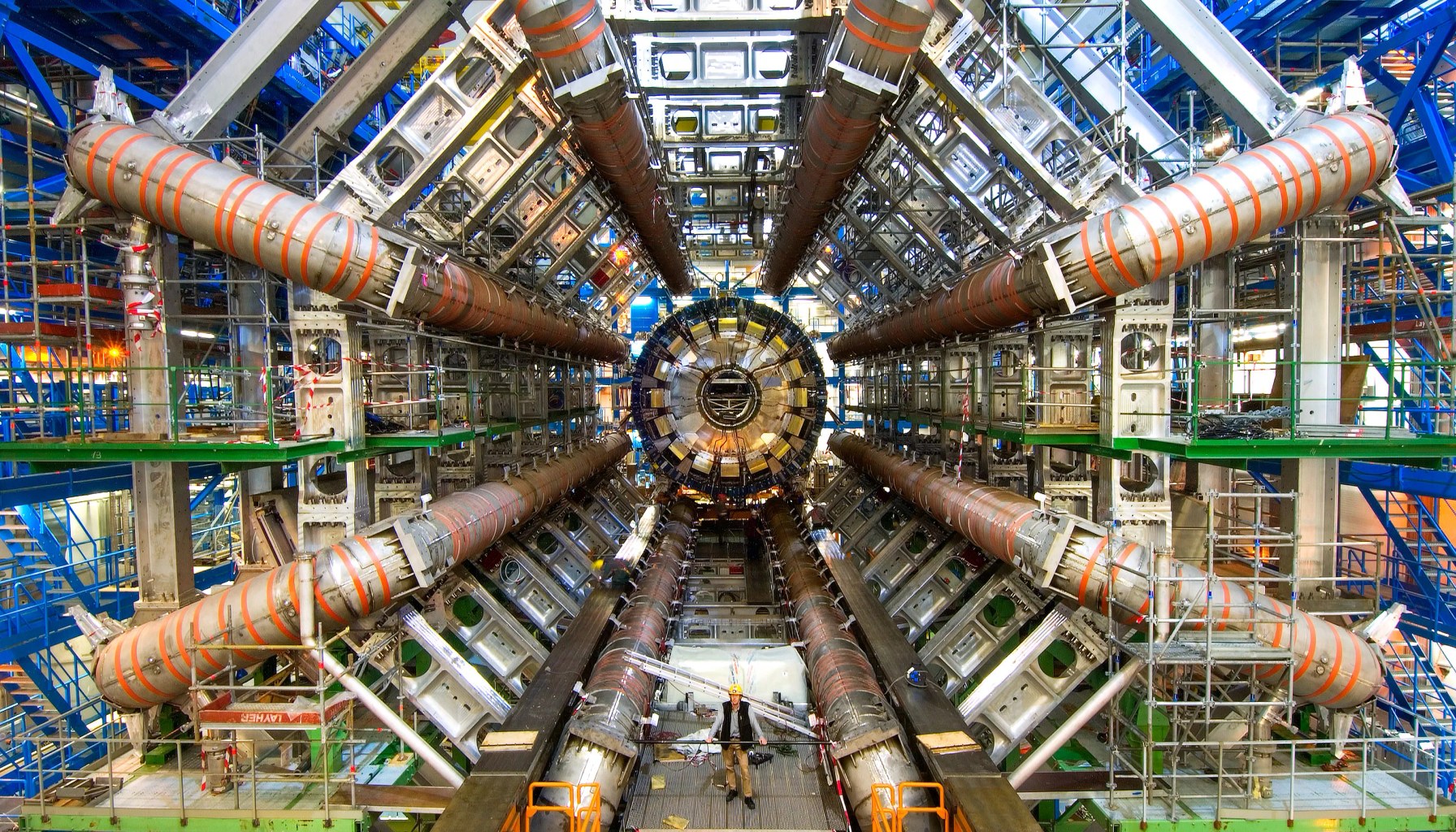

Image / technologyreview.com

AI compute just keeps doubling, and skeptics are running out of excuses.

The latest edition of The Download, MIT Technology Review’s weekday briefing, frames a stubborn reality: predictions that AI compute would soon plateau are not panning out. Mustafa Suleyman, a leading voice in AI development, argues that the growth is still fueled by three concrete forces. First, faster basic calculators: the steady arrival of more capable CPUs and accelerators. Second, high-bandwidth memory that moves data into and out of chips at ever-greater speeds. Third, technologies that link large pools of GPUs into colossal, cloud-scale supercomputers, enabling training and inference at scales once thought impractical. Taken together, these forces keep pushing models to be bigger, faster, and more capable.

The piece doesn’t sugarcoat the tradeoffs. The three levers are powerful but not cost-free: more compute means more energy, more cooling, and more hardware to procure. Yet the newsletter argues that the “wall” many predicted isn’t arriving soon. In other words, the industry’s rapid growth isn’t a mirage of hype; it is underwritten by real hardware and software progress that compounds over time. The AI boom is not a single leap but a sequence of improvements that unlock new capabilities faster than expected.

The article also leans on a memorable analogy from a different frontier: artificial turf. Synthetic turf installations exploded from 7 million square meters in 2001 to 79 million by 2024, a pace that’s hard to ignore and hard to regulate. The same tension shows up in tech: progress is visible and seductive, even as questions about environmental, energy, and supply-chain costs linger. If the turf story shows how industry narratives can outpace scrutiny, the AI compute story cautions that growth, while real, comes with externalities that won’t vanish with the next model release. The underlying message: the “exponential” pace is traceable to concrete hardware and architectural shifts, not magic.
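As a back-of-the-envelope check on the turf figures, the implied compound annual growth rate can be computed directly. This is a minimal sketch that assumes smooth exponential growth between the two data points quoted above (7 million square meters in 2001, 79 million in 2024); the function names are illustrative and do not come from the newsletter.

```python
import math


def compound_annual_growth_rate(start_value, end_value, years):
    """Constant yearly growth rate that turns start_value into end_value."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("start_value, end_value, and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def doubling_time_years(annual_rate):
    """Years needed to double at a constant annual growth rate."""
    if annual_rate <= 0:
        raise ValueError("annual_rate must be positive")
    return math.log(2) / math.log(1.0 + annual_rate)


# Figures quoted in the article; smooth exponential growth is an assumption.
turf_rate = compound_annual_growth_rate(7e6, 79e6, 2024 - 2001)
turf_doubling = doubling_time_years(turf_rate)

print(f"Implied growth: {turf_rate:.1%} per year")
print(f"Implied doubling time: {turf_doubling:.1f} years")
```

Under that assumption the turf numbers work out to roughly 11 percent growth a year, or a doubling about every six and a half years. The same two helpers can be pointed at any pair of compute figures a reader trusts; the article itself does not quote a specific doubling time for AI compute.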

For practitioners building and shipping products today, the implications are tangible. First, expect access to larger, more capable foundation models, not just through clever prompts but through underlying hardware ecosystems that keep scaling: cloud GPU pools knit together by fast interconnects and smarter memory hierarchies. This lowers barriers to experimentation: you can test bigger architectures or more ambitious fine-tuning without a wholesale rebuild of your compute strategy. Second, energy and cooling costs remain a practical constraint. The promise of bigger models must be weighed against real-world total cost of ownership (TCO), especially for startups and product managers budgeting cloud spend. Third, efficiency matters beyond raw compute: model parallelism, memory bandwidth optimization, and data handling pipelines will separate winners from the rest. In other words, you can’t rely on hardware alone to deliver value; software efficiency and deployment discipline matter as much as ever.
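One way to make the budgeting point concrete is a rough cost estimate per training or fine-tuning run. The sketch below is only an illustration: the class name, the overhead factor, and every number in the example (GPU count, hours, hourly price, budget) are hypothetical placeholders, not figures from the newsletter or from any cloud provider.

```python
from dataclasses import dataclass


@dataclass
class RunEstimate:
    """Rough cost model for one cloud training or fine-tuning run."""
    gpu_count: int
    hours: float
    price_per_gpu_hour: float       # hypothetical cloud rate in USD
    overhead_fraction: float = 0.0  # storage, networking, retries (assumed)

    def gpu_hours(self):
        return self.gpu_count * self.hours

    def total_cost(self):
        base = self.gpu_hours() * self.price_per_gpu_hour
        return base * (1.0 + self.overhead_fraction)


def runs_within_budget(estimate, budget_usd):
    """How many whole runs of this size fit in the given budget."""
    cost = estimate.total_cost()
    if cost <= 0:
        raise ValueError("run cost must be positive")
    return int(budget_usd // cost)


# All values below are made-up placeholders for illustration.
example_run = RunEstimate(gpu_count=8, hours=72, price_per_gpu_hour=2.50,
                          overhead_fraction=0.2)
example_budget = 10_000

print(f"GPU-hours per run: {example_run.gpu_hours():.0f}")
print(f"Estimated cost per run: ${example_run.total_cost():,.2f}")
print(f"Runs within budget: {runs_within_budget(example_run, example_budget)}")
```

A model this simple ignores reserved-instance discounts, idle time, and inference traffic, but it is usually enough to show whether a bigger architecture is a line item or a budget-breaker before any hardware is booked.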

An analogy helps crystallize it: think of AI compute as a turbine with three blades: faster calculators, higher-bandwidth memory, and tightly linked GPU clusters. Each blade reinforces the others, and together they turn a modest drizzle of improvements into a deluge of capability. If one blade dulls, growth slows; if two sharpen, growth accelerates; if all three hum in harmony, the system can reach scales that feel almost magical to teams without access to gigantic data centers.

Still, there are failure modes to watch. If energy prices spike or supply chains for specialized chips buckle, the pace could stall even with continued architectural gains. Betting on hardware trends without rigorous efficiency research can yield brittle deployments. And amid rapid expansion, robust evaluation, responsible scaling, and transparent benchmarks become even more critical.

What this means for products shipping this quarter is clear: don’t rely on a single hardware improvement to close your roadmap gaps. Plan for inference and memory systems that can scale together, invest in model optimization, and build in observability to catch efficiency bottlenecks early. The AI revolution isn’t over, but it isn’t a free ride either; it’s a careful balance of hardware, software, and prudent product discipline.
