AI's Wall-Free Future: Exponential Compute

By Alexander Cole


AI's growth curve has no ceiling—it's a compute avalanche.

Mustafa Suleyman argues that skeptics may be waiting for a wall, but frontier AI keeps advancing because data and compute, and the economics behind them, scale in tandem. Since 2010, the amount of training data fed into ambitious models has exploded, and the compute behind the largest systems has grown by a factor that looks almost inconceivable in ordinary planning. Suleyman puts it starkly: from roughly 10^14 FLOPs for early systems to over 10^26 FLOPs today. That 1,000,000,000,000-fold (10^12) expansion isn’t a one-off spike; it’s the new baseline of progress as teams stitch together bigger datasets, faster hardware, and smarter software.
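To make the scale of that jump concrete, the arithmetic can be sketched in a few lines of Python. The two endpoints (10^14 and 10^26) are the figures quoted above; the 15-year span is purely an illustrative assumption, not a number from Suleyman, so the implied doubling time should be read as a rough back-of-the-envelope estimate.

```python
import math

# Endpoints quoted in the article (total floating-point operations).
EARLY_FLOPS = 10**14
TODAY_FLOPS = 10**26

# ASSUMPTION: an illustrative time span; the article does not state one.
ASSUMED_SPAN_YEARS = 15


def fold_increase(start: int, end: int) -> int:
    """How many times larger `end` is than `start` (integer division)."""
    return end // start


def doublings(start: int, end: int) -> float:
    """Number of successive doublings needed to go from `start` to `end`."""
    return math.log2(end / start)


def doubling_time_months(start: int, end: int, span_years: float) -> float:
    """Average months per doubling if growth were spread evenly over the span."""
    return span_years * 12 / doublings(start, end)


if __name__ == "__main__":
    print(f"Fold increase: {fold_increase(EARLY_FLOPS, TODAY_FLOPS):,}")
    print(f"Doublings: {doublings(EARLY_FLOPS, TODAY_FLOPS):.1f}")
    months = doubling_time_months(EARLY_FLOPS, TODAY_FLOPS, ASSUMED_SPAN_YEARS)
    print(f"Implied doubling time over {ASSUMED_SPAN_YEARS} assumed years: {months:.1f} months")
```

A trillion-fold increase works out to roughly 40 doublings. Changing `ASSUMED_SPAN_YEARS` shows how sensitive the implied doubling time is to the period chosen.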

To readers in product teams and engineering shops, the logic is provocative: you don’t hit a wall when the levers of data and compute move almost in lockstep. The era Suleyman describes rests on an underlying assumption: data is not a fixed asset but a renewable resource that grows with scale, and compute is less a bottleneck than a continuously improving substrate. The consequence: frontier models can be trained, fine-tuned, and iterated at speeds and costs that encourage longer planning horizons for product roadmaps. In practice, that means more ambitious capabilities reaching production, more experimentation cycles, and a higher bar for what counts as “good enough” as the target keeps moving.

But the implications come with real caveats. First, energy and hardware supply chains matter as much as the models themselves; scaling up also raises exposure to outages, chip shortages, and inflated bills. Second, the quality and provenance of data continue to govern outcomes far beyond raw volume; models trained on broader data still need alignment, safety, and bias mitigation integrated into the training loop. Third, efficiency matters as much as capacity. If tomorrow’s gains come from smarter architectures and better software tooling (e.g., parallelism, sparsity, and model sharding), teams that optimize the full stack stand to outpace those chasing raw scale alone.

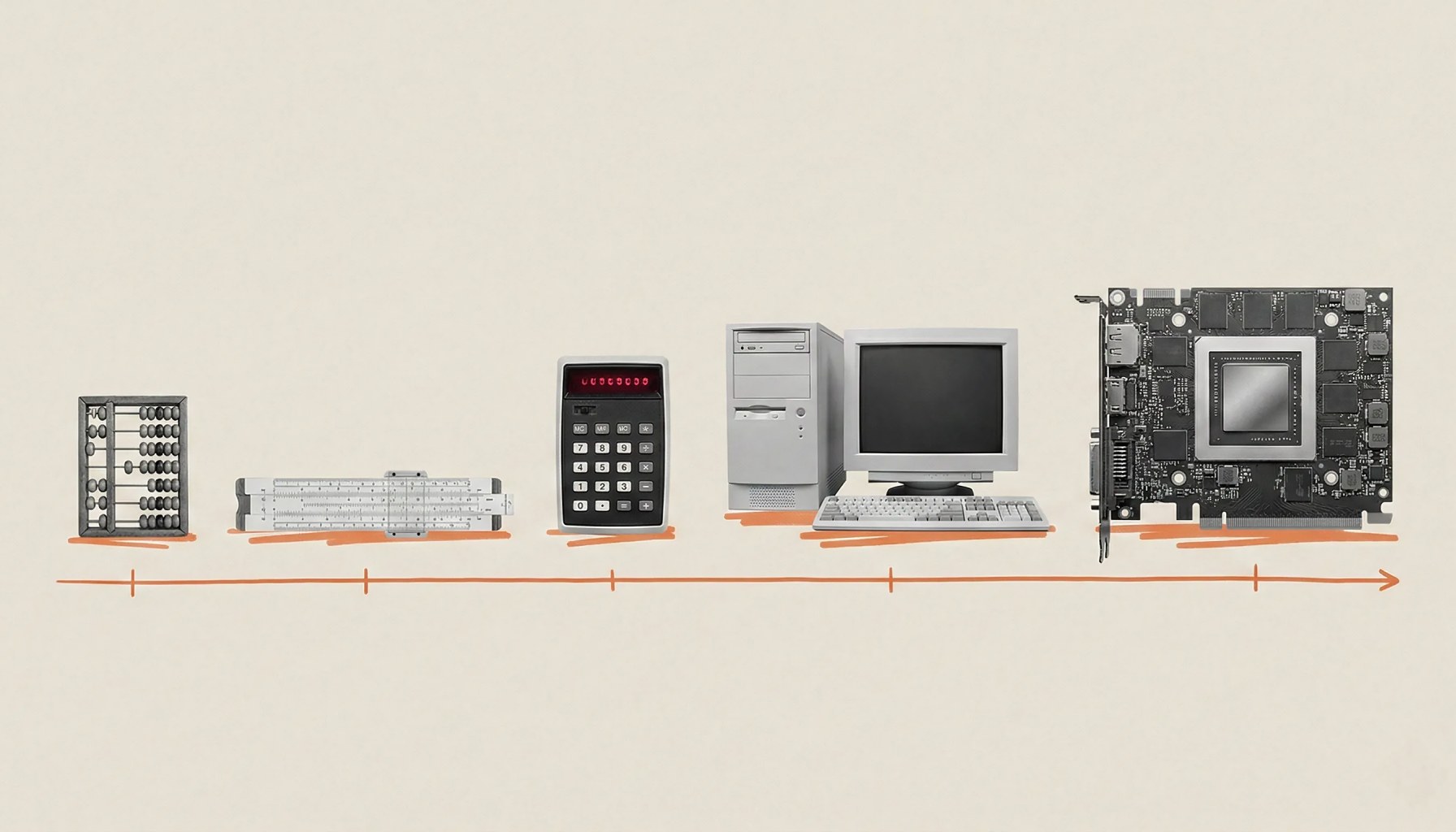

A vivid way to picture Suleyman’s thesis: think of AI training as a room full of people with calculators. For years, adding more hands only sometimes sped things up because the numbers didn’t flow fast enough. The current wave is different—the room itself is getting better calculators, faster data streams, and smarter ways to divide labor. The result isn’t just “bigger models” but models trained with a more efficient relay of data through hardware and software, letting teams iterate without crippling costs or timelines.

For practitioners, four concrete takeaways stand out. First, compute budgets will dominate until the next breakthrough: plan product timelines with multi-quarter training loops in mind, and secure scalable cloud or dedicated hardware contracts early. Second, data strategy remains critical: quality, labeling, and curation can outweigh the marginal gains from merely increasing volume, so invest in data tooling and governance. Third, efficiency isn’t optional; expect to optimize architectures, distribution, and training pipelines, because the fastest path to productization often lies in smarter engineering, not simply bigger GPUs. Fourth, watch for environmental and supply-chain constraints that could throttle even a well-funded ramp; diversify suppliers, monitor energy costs, and build contingency plans.

In the short term, what this means for products shipping this quarter is nuanced: you may see capabilities improve not just because someone trained a bigger model, but because a more efficient pipeline yields faster iteration and cheaper experiments. The trend Suleyman highlights—an exponential ramp in compute and data—suggests momentum will continue to reward teams who pair scale with discipline: governance, safety, and cost control alongside raw capability.
